Changelog

AI Gateway: LLM request logs and Langfuse integration

The AI Gateway now gives you full visibility into your LLM requests — both in the Aptible UI for day-to-day troubleshooting and via log drain integrations for long-term compliance and observability.

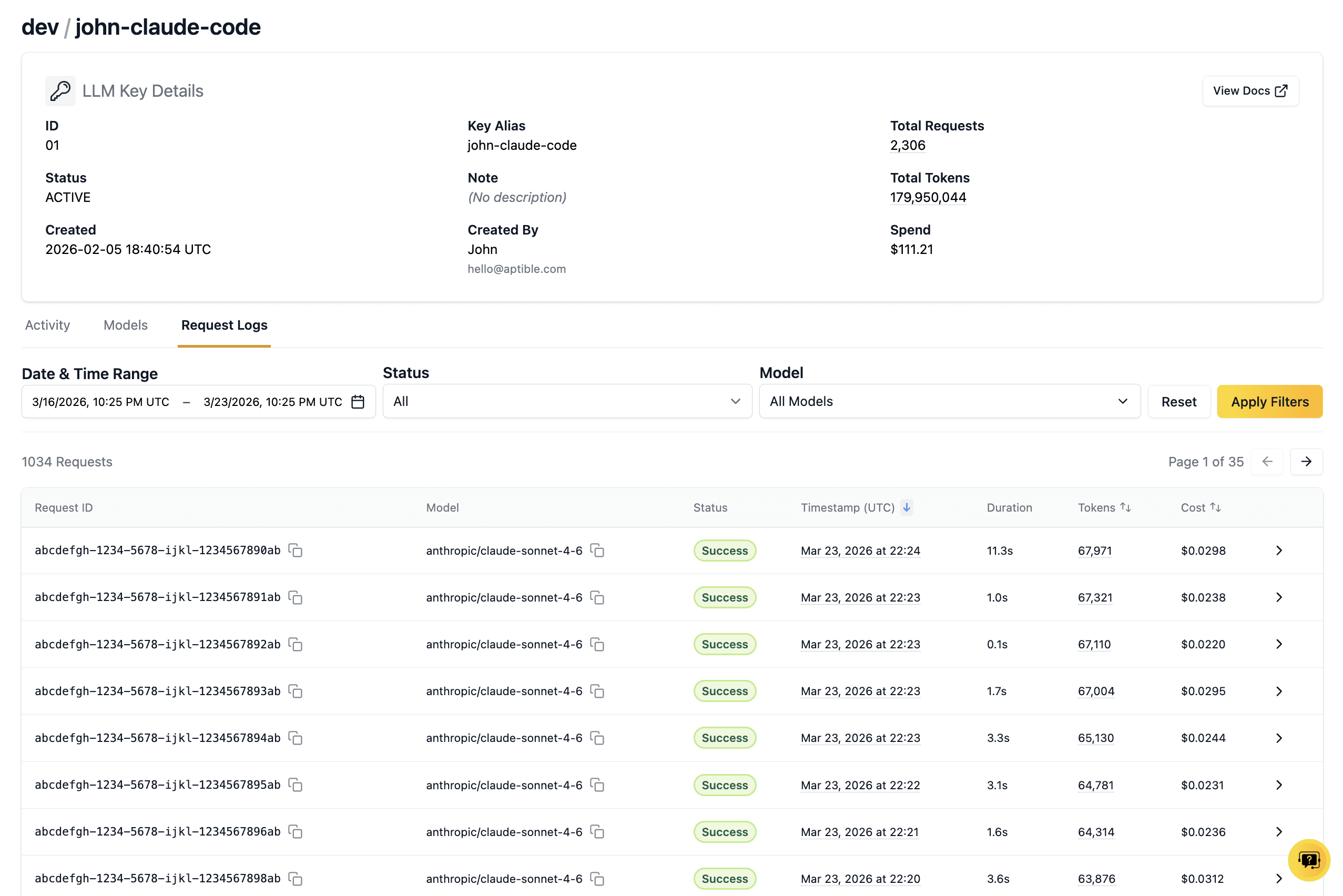

View request and response logs in the UI

You can now view the full contents of your LLM requests and responses directly in the Aptible UI. From the key details page, click into any request to see the complete request and response payloads. Use this to verify your gateway configuration, refine prompts, and troubleshoot issues without leaving the product.

Stream logs to Langfuse

You can now configure a Langfuse log drain from the Aptible UI to send your full LLM request and response logs to Langfuse for prompt management, observability, and analysis. LLM trace drains are configured at the environment level in the Integrations tab.

A note about Anthropic models

We now support accessing Anthropic models through Bedrock, e.g. bedrock/anthropic.claude-sonnet-4-6 instead of anthropic/claude-sonnet-4-6. In the near future, we plan to fully transition to only supporting Anthropic models through bedrock/anthropic — please update your existing Anthropic usage accordingly.
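As a rough illustration of the rename, the sketch below rewrites a model string from the `anthropic/` form to the `bedrock/anthropic.` form used in the example above. The helper name `to_bedrock_model` is hypothetical, and the code assumes every Anthropic model follows the same pattern as that single example, so confirm the model identifiers available to your keys before relying on it.

```python
ANTHROPIC_PREFIX = "anthropic/"
BEDROCK_PREFIX = "bedrock/anthropic."


def to_bedrock_model(model: str) -> str:
    """Rewrite 'anthropic/<name>' to 'bedrock/anthropic.<name>'.

    Assumption: all Anthropic models follow the pattern of the one example
    in this changelog. Any other model string is returned unchanged.
    """
    if model.startswith(ANTHROPIC_PREFIX):
        return BEDROCK_PREFIX + model[len(ANTHROPIC_PREFIX):]
    return model


print(to_bedrock_model("anthropic/claude-sonnet-4-6"))
```

A helper like this can be applied wherever model names are read from configuration, so the change stays in one place.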

Introducing Point-in-time Recovery for PostgreSQL databases

In our effort to keep making it easier to maintain a rigorous disaster recovery posture on Aptible, we've added Point-in-time Recovery (PITR) capabilities for Aptible Managed PostgreSQL databases, at no additional charge.

PITR is enabled by default for all PostgreSQL databases (with a few exceptions noted in our docs, like EOL versions or databases with backups disabled). Some databases must be restarted in order to (automatically) enable PITR; for other databases, PITR is already enabled. To check whether PITR is enabled for any of your PostgreSQL databases, refer to the Backups column on the Databases list view, or the Point-in-Time Recovery attribute on any database's detail page.

To restore any PITR-enabled database to a specific point in time in a new database, visit the Recovery tab on that database's detail page, and provide: a name for the new database, and the timestamp to which you'd like to restore. Currently, we limit PITR restores to timestamps in the last 24 hours. If your use case requires restoring to a point in time older than 24 hours, please let us know in Slack or via a support ticket.
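If you script restores, it can help to check a target timestamp against the 24-hour limit before opening the Recovery tab. The sketch below is only a client-side sanity check: the function name `is_restorable` is hypothetical, and treating both ends of the window as inclusive is an assumption, not documented platform behavior.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

PITR_WINDOW = timedelta(hours=24)  # limit stated in this changelog entry


def is_restorable(target: datetime, now: Optional[datetime] = None) -> bool:
    """Return True if `target` falls within the last 24 hours.

    Both datetimes must be timezone-aware. Boundary handling is an assumption.
    """
    now = now or datetime.now(timezone.utc)
    return now - PITR_WINDOW <= target <= now


reference = datetime(2026, 1, 2, 12, 0, tzinfo=timezone.utc)
print(is_restorable(reference - timedelta(hours=3), reference))
print(is_restorable(reference - timedelta(hours=30), reference))
```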

If you have any questions about how PITR works or how to use it, check out our docs on PITR!

Platform Improvements: March 2026

Activity: more visibility, better filtering

Activity now includes operations for deleted resources, with full drill-down into historical events

Added read-only activity tracking (e.g., sensitive access events), available via the Show Read-Only filter

Activity events can now be streamed to your SIEM or logging provider for centralized monitoring, alerting, and compliance workflows

Improved table experience, including the ability to customize visible columns

Updated operation naming in UI for clarity. For example:

membership.create → Role Add User

membership.destroy → Role Remove User

permission.create → Role Add Permission

permission.destroy → Role Remove Permission

Activity can now be filtered by text-based search

Automated activities now clearly identified as automated

Container Efficiency (formerly scaling recommendations)

Scaling recommendations are now presented as Container Efficiency

“Scale Down” → Over-provisioned

“Scale Up” → Under-provisioned

Added a tooltip to view usage stats and rationale

View Container Efficiency for App Services here and Databases here

Performance improvements

Fix for log_drain:deprovision

Improved performance for orgs with large resource counts

Faster git-based deploy initiation

Improved performance when checking app existence before deploy

Faster search in the Aptible web app

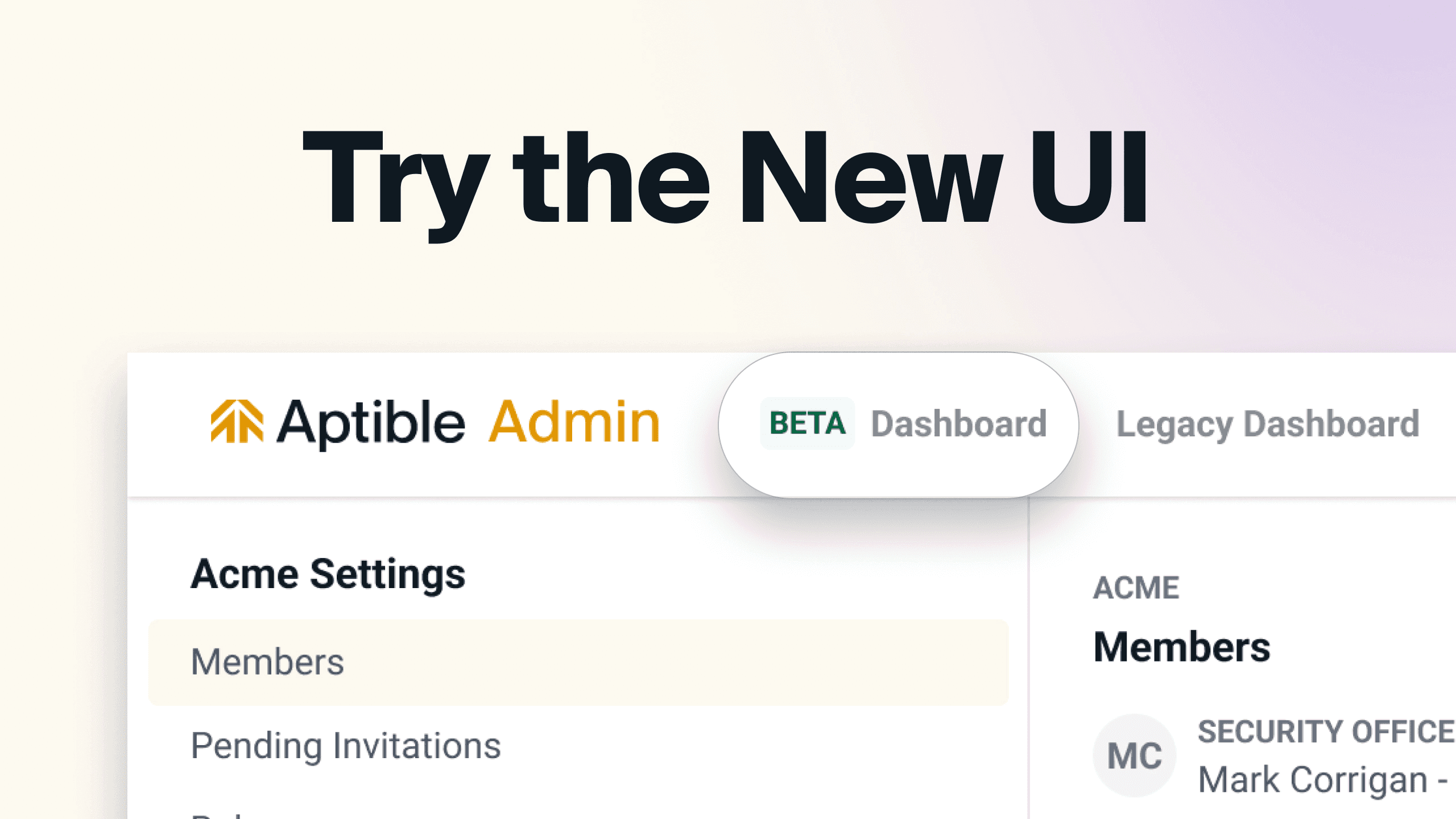

UI Home (Open BETA)

Updated Compliance widget:

PCI is now visible for all customers

PIPEDA is now visible for customers with Canadian dedicated stacks

AI Gateway: More granular spend data and request history

The AI Gateway now offers more granular spend data and key request history to help you understand and optimize your LLM usage.

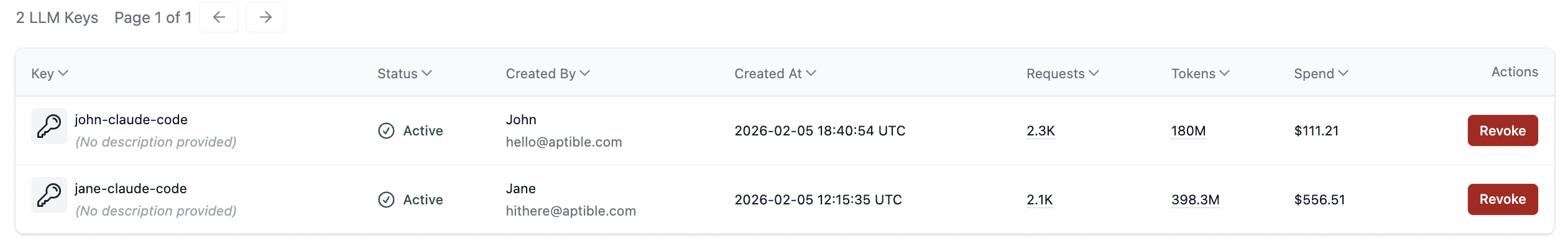

Spend data by key

In the keys list and the key details page, you can see the spend during the current usage period for each key. Use this to understand which keys are most active, and which use cases might benefit from cost optimization.

Request history and spend data by request

In the key details page, you can see all the requests made by the key in the last 7 days, including token usage and cost information.

Coming soon: view the contents of your LLM request and response logs to help with prompt refinement and troubleshooting.

AI Gateway model access policies and cost visibility

Aptible AI Gateway (beta) now includes model access policies and cost visibility.

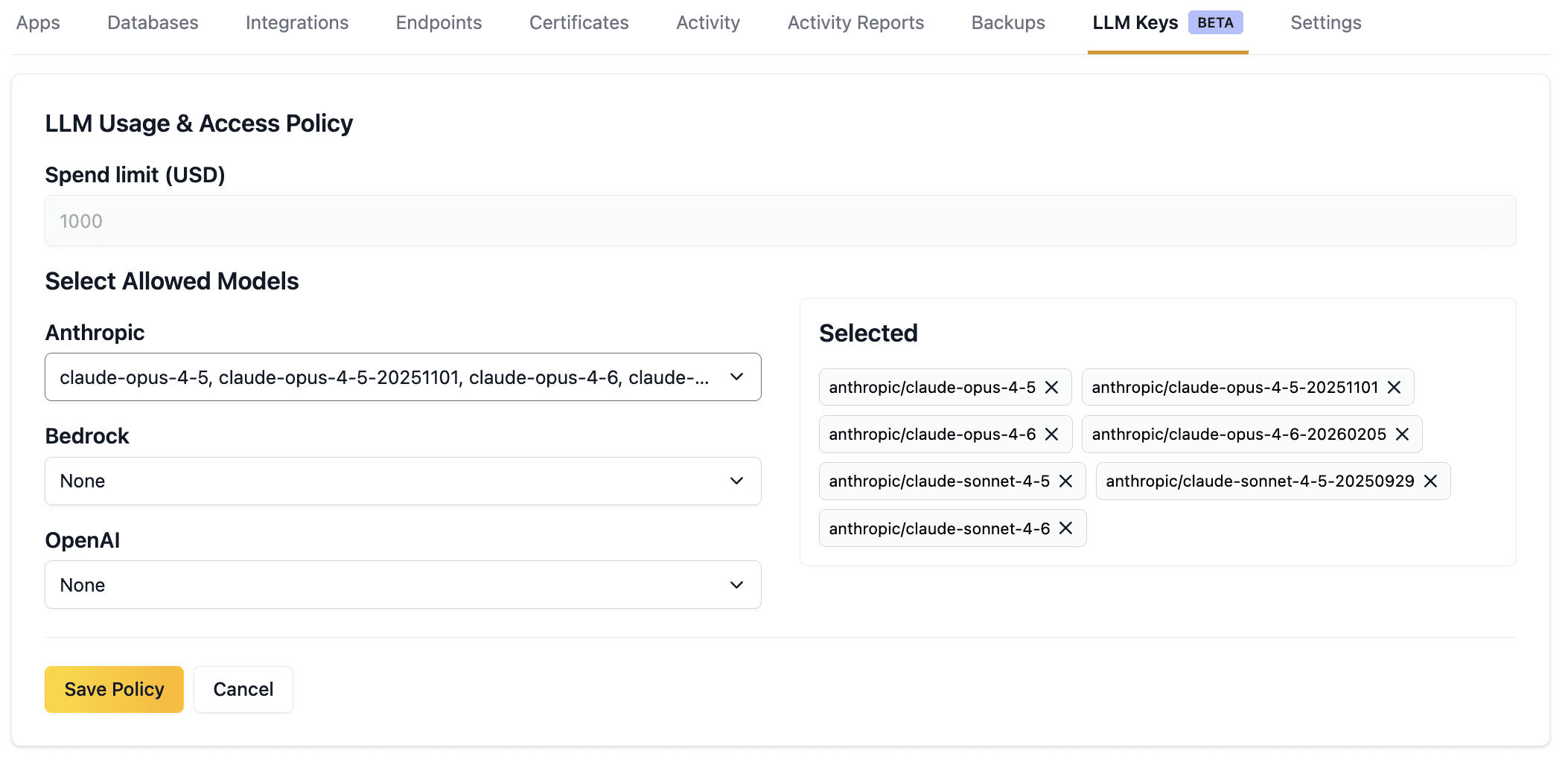

Access Policies

Use model access policies to ensure that your applications and developers are using the right model for the job. Model access policies are set at the environment level and apply to all keys within that environment.

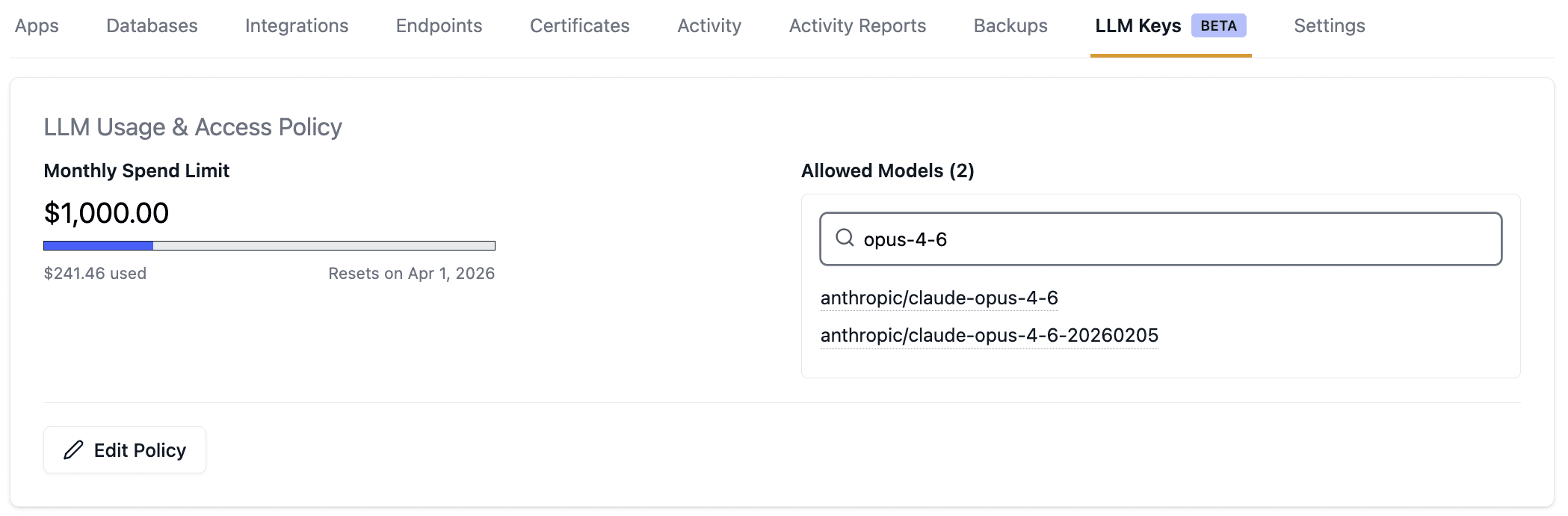

Cost Visibility

View LLM usage at the environment and global level to understand your spend and remaining budget for the month. Coming soon: view costs at the key and request level to understand and optimize more granular usage.

Aptible AI Gateway is currently in beta. Contact Aptible Support to join.

Introducing: Comprehensive, easily queryable Activity data

Based on feedback, we've made major improvements to our Activity page (video demo):

Comprehensive data - All platform actions are captured, now including auth events (successful/failed logins, role changes, and more) and deleted resources

Unlimited lookback - Going forward, the Activity page supports unlimited lookback, compared to the previous 7-day limit

Improved search/speed - Multi-select filters (by user, time range, activity type, or environment) and 5x faster responses on average

Try it out here: https://app.aptible.com/activity

Read the docs here for more information on activity.

We’re continuing to build on this, with CSV export, full-text search, API docs, and SIEM integrations coming soon. We’d love your feedback! Share thoughts in the thread here or upvote/submit ideas on our public roadmap.

Support for Elasticsearch 8 & 9

We are happy to announce that Elasticsearch versions 8.17 through 9.2 are now available on Aptible. These versions introduce a significant number of enhancements and features to Elasticsearch, including improvements to performance, vector search, and semantic modeling. Since this is a significant upgrade from our previously offered version, Elasticsearch 7.10, we recommend that users review the Elasticsearch release notes closely for compatibility.

Additionally, due to the lack of open-source versions between Elasticsearch 7.10 and 8.17, we are not able to offer in-place database upgrades at this time. We advise customers to provision new databases or use Elasticsearch's snapshot and restore API (for example, with the S3 repository plugin) to transfer indices to a newer version.

Other releases

Improvements to the new UI Home page - currently in Open BETA

Recently Viewed widget: New widget on the Home dashboard to see recently viewed resources.

Last Deploy widget: New widget on the Home dashboard to see your most recently deployed app, the status of the deploy, and commit data.

VPC Peering requests (creations and deletions) can now be submitted in the UI by account owners

Introducing LLM Gateway (open BETA)

This feature is part of our Managed AI initiative, designed to make it easier to deploy, run, and scale AI applications with Aptible. Need something else for your AI apps? Let us know.

We've launched a beta of our new LLM gateway product, which gives you an easy way to use LLMs with built-in compliance features. You can generate LLM keys through the Aptible UI, and your usage will be HIPAA-compliant through BAA coverage for models, automatic audit logging, data encryption, and no PHI retention for model training.

If you're interested in joining the beta, contact Aptible Support

Support for PostgreSQL 18 and more

PostgreSQL 18

We are happy to announce that PostgreSQL 18 is now available on Aptible. PostgreSQL 18 introduces several new features and enhancements, such as a new asynchronous I/O system for certain operations like vacuum, and improved locking performance for queries that access multiple relations. Additionally, there’s a significant number of smaller improvements associated with this release, so we recommend reading through the official release notes for PostgreSQL 18. Please reach out to Aptible Support if you have any questions.

New Open BETAS

Point-in-time Recovery for PostgreSQL: Point-in-time recovery (PITR) for PostgreSQL is now available in public BETA! Check out the docs here and contact Aptible Support if you're interested in enabling.

Home page in Aptible Dashboard: We've launched a new home page for the Aptible dashboard in BETA. We plan to continue iterating and making changes here over the next few weeks so we can showcase the most relevant information to you. Want access or have feedback? Contact Aptible Support!

Other releases:

New cross-region copy region for ap-southeast-2: Database backups taken within the ap-southeast-2 region will now be copied to ap-southeast-4, going forward, when the environment's cross-region copy setting is enabled. This update allows for continuous compliance with Australian data requirements.

Improved Scaling Recommendations data: We've updated our in-app scaling recommendations to include more data, allowing you to better understand the recommendations being made.

Fix - Failing/incomplete loading for in-app metrics: We released a fix for an issue that occasionally caused failure or incomplete loading of in-app metrics for services with many containers.

Support for InfluxDB 1.11/1.12 and RabbitMQ 4.1/4.2

InfluxDB 1.11 and 1.12

We are happy to announce that InfluxDB 1.11 and 1.12 are now available on Aptible. As the first public releases of InfluxDB v1 since 2021, these versions include multiple enhancements and bug fixes, which can be reviewed in InfluxData’s changelog.

These database versions are available now and can be provisioned through the UI and CLI. If you’re looking to upgrade an existing instance of InfluxDB v1, please reach out to Aptible Support.

RabbitMQ 4.1 and 4.2

We are happy to announce that RabbitMQ 4.1 and 4.2 are now available on Aptible. These feature releases include performance improvements as well as some minor enhancements. You can read more about each version in its release notes.

These database versions are available now and can be provisioned through the UI and CLI. If you’re interested in upgrading an existing RabbitMQ database to one of these versions, please reach out to Aptible Support.

Other releases

MySQL Updates:

New Minor Version Available: MySQL 8.0.44 and 8.4.7 are now available

MySQL replicas are now read-only by default

Restoring a backup of a MySQL replica will disable replication by default to give users more control over the recovery process.

Invoices: Invoices are now available in our main UI. Previously, they were only accessible through our legacy dashboard.

Tip: You can now give access to users other than Account Owners with the new Billing Only Role.

New Search Tags: You can now filter resources using new search tags:

Database and Services page:

scaledownrec:true, scaleuprec:true - to see where scaling down or up is recommended.

Services page:

autoscaling:true - to see a list of services with autoscaling enabled.

Managed Certs Bug Fix: Managed certificates will no longer appear in the custom certificate dropdown

Support for Redis 7.2, 8.0, and 8.2

We are happy to announce that Redis 7.2, 8.0, and 8.2 are now available on Aptible. Each version introduces performance optimizations and new capabilities to improve the efficiency, usability, and security of Redis databases. You can learn more about each version in Redis’ official documentation, which we’ve linked below for convenience.

Introducing Billing-Only Role

A new Billing-Only Role is now available in all accounts. This role lets you grant team members access to billing-related tasks, such as viewing and managing invoices and payment methods, without adding or modifying access to any Aptible resources. This makes it easy to:

Provide your Finance team with billing access without granting access to any resources: apps, databases, or environments

Give a developer visibility into invoices without changing their resource access

Only Account Owners can add or remove users from the Billing-Only Role. Users in this role cannot invite others or modify role assignment. Visit the Roles page to get started.

Other releases:

Shared Endpoints improvements:

Migrating to, or modifying certain attributes of, a shared endpoint now waits longer for existing connections to close naturally

Endpoints now display whether Shared Endpoints can be enabled. For example, non-ALB endpoints show “Shared Endpoints = N/A” so it’s clear the option doesn’t apply.

You can now also use the shared:no search tag within the endpoints page to see a list of potential shared endpoints. Quick link here

The IDLE_TIMEOUT value also now sets the HTTP client keep-alive setting for HTTPS Endpoints. Related docs.

Autoscaling operations are no longer enqueued while configure operations are running.

The Database overview table and individual Database details now display whether backups are enabled or disabled.

You can now request a Dedicated Stack to be deprovisioned directly in the Aptible Dashboard. Navigate to the Dedicated Stack’s Settings tab to use this.

Companion Git Repository Deprecation

Aptible will be deprecating Companion Git Repositories starting today, September 15th. Customers will have until November 3rd, 2025 to migrate.

What is a Companion Git Repository, and why is it being removed?

Initially, Aptible supported deploying applications only via Git: customers provided a Dockerfile, and the platform built the app image. In June 2017, we added support for deploying pre-built Docker images. At that time, if you needed to define processes or migrations using a Procfile or .aptible.yml, those files still had to be provided via Git in addition to your image. This pattern of providing configuration files via Git is known as a Companion Git Repository. In January 2018, we added support to provide Procfiles and .aptible.yml directly within Docker Images. At that time we indicated that the Companion Git Repository was deprecated, but gave an indefinite timeline for customers to migrate. In order to continue to modernize and simplify our platform, we’re finally taking action to require customers to stop using this deprecated feature.

What impact will this have on me?

Hopefully, none! Fewer than 2% of apps hosted on Aptible use a Companion Git Repository, and even fewer include a Procfile or .aptible.yml. Since there is no functional difference in how these files are provided, migrating off the deprecated method will not affect app behavior. If you’re one of the impacted customers, we’ve already contacted your Ops Alert contact directly and provided them with a list of affected apps.

Starting today, impacted apps will fail to deploy. You can either:

Migrate your app immediately, using the "How do I migrate?" section of our documentation

Temporarily opt-in to continue using the feature by acknowledging the deprecation for each impacted App. See “Using Companion Git Repositories during deprecation” in the documentation here.

On November 3rd, 2025, the opt-in behavior will be removed, and you will be required to migrate any remaining Apps before deploying them again.

Introducing Shared Endpoints

HTTP(S) endpoints can now be configured to share their underlying load balancer with other endpoints on the same stack, reducing the cost of the endpoint by 33% from $0.06/hr to $0.04/hr. The latest versions of the Aptible UI, CLI, and Terraform provider all support creating shared endpoints and converting dedicated endpoints to shared.
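The arithmetic behind the 33% figure, and what it could mean per month, can be sketched as follows. The hourly rates come from this announcement; the 730-hour month and the function names are assumptions made for illustration, and the result is an estimate rather than a quote from your invoice.

```python
HOURS_PER_MONTH = 730  # assumption: average number of hours in a month

DEDICATED_CENTS_PER_HOUR = 6  # $0.06/hr, from the announcement
SHARED_CENTS_PER_HOUR = 4     # $0.04/hr, from the announcement


def percent_reduction(old_cents: int, new_cents: int) -> float:
    """Relative price reduction, as a percentage of the old price."""
    return (old_cents - new_cents) / old_cents * 100


def monthly_savings_dollars(endpoint_count: int) -> float:
    """Estimated monthly savings from converting endpoints to shared."""
    saved_cents = (DEDICATED_CENTS_PER_HOUR - SHARED_CENTS_PER_HOUR) * HOURS_PER_MONTH
    return endpoint_count * saved_cents / 100


print(round(percent_reduction(DEDICATED_CENTS_PER_HOUR, SHARED_CENTS_PER_HOUR)))
print(monthly_savings_dollars(5))
```

Working in whole cents avoids floating-point rounding noise in the subtraction.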

The shared endpoint experience is nearly identical to that of dedicated endpoints, as long as all of the clients using the endpoint support Server Name Indication (SNI) (which they should, as SNI has been widely supported for over 10 years) and the endpoint does not need to use a wildcard domain to serve multiple domains.

Get started:

Learn more about shared endpoints from our documentation.

Install the latest version of the Aptible CLI.

Future changes:

We plan to make Shared Endpoints the default setting for all new endpoints where applicable. Following this, we plan to roll out the same change to existing endpoints where applicable.

RabbitMQ Prometheus Plugin Now Available

Aptible's RabbitMQ now enables the Prometheus plugin by default for newly created 3.13 and 4.0 databases. The Prometheus plugin provides access to all RabbitMQ metrics, making it a great option for monitoring and alerting in conjunction with tools like Grafana. The plugin is accessible through a new set of Database Credentials and is discoverable through the Connection URLs tab in the UI, Database Endpoints, and Database Tunnels. If you have any questions, please reach out to Aptible Support.

Load Balancing Algorithms for HTTP(s) Endpoints

You can now configure how HTTP(s) endpoints route requests in Aptible via the UI, CLI, and Terraform.

For request routing, we've traditionally defaulted to Round Robin, a straightforward algorithm that routes requests evenly between containers in sequential order. However, for services whose requests vary in complexity, this may not always be the best option, as requests can stack up on a container that is busy with slow requests.

With this update, we're exposing two new options: Least Outstanding Requests and Weighted Random. Least Outstanding Requests routes traffic to the container with the fewest in-progress requests and is great for varied workloads. Weighted Random, on the other hand, routes requests evenly between all containers like Round Robin, but in a random order.
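To make the difference between the three algorithms concrete, here is a deliberately simplified model of each selection rule. It is a sketch for intuition only, not the load balancer's actual implementation: the function names and the in-flight request counts are made up for illustration, and the random variant assumes equal weights for every container.

```python
import random
from itertools import cycle
from typing import Dict, Iterator, List


def round_robin(containers: List[str]) -> Iterator[str]:
    """Yield containers in a fixed, repeating order."""
    return cycle(containers)


def least_outstanding_requests(in_flight: Dict[str, int]) -> str:
    """Pick the container with the fewest in-progress requests."""
    return min(in_flight, key=in_flight.get)


def weighted_random(containers: List[str], rng: random.Random) -> str:
    """Pick a container at random; equal weights spread load evenly on average."""
    return rng.choice(containers)


containers = ["web-1", "web-2", "web-3"]
rr = round_robin(containers)
print([next(rr) for _ in range(4)])

# web-1 is busy with slow requests, so the next request goes elsewhere.
print(least_outstanding_requests({"web-1": 3, "web-2": 1, "web-3": 2}))

print(weighted_random(containers, random.Random()))
```

Round Robin ignores how busy each container is, which is why a few slow requests can pile up on one container while Least Outstanding Requests steers new traffic away from it.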

You can configure the Load Balancing Algorithm in the UI through a dropdown when you create or edit HTTP(s) endpoints, in v0.24.8 of the CLI using the --load-balancing-algorithm-type flag in the endpoints:https:create and endpoints:https:modify commands, and through the load_balancing_algorithm_type attribute in Terraform. Valid options are round_robin (default), least_outstanding_requests, and weighted_random.

Reach out to Aptible Support if you have any questions.

Restart-free scaling operations for HAS

Scale operations on services with horizontal autoscaling enabled can now be configured to add or remove only the number of containers needed to reach the desired running count, without restarting the other running containers in the service.

If you are using horizontal autoscaling on a background worker or job processing type service, you may want to configure it to use the restart-free variant in order to minimize interruptions of jobs in process.

The restart-free scale type may also be helpful if you are using horizontal autoscaling on a web application that can experience sudden traffic spikes, since the restart-free scaling will allow capacity to be added without interrupting all current connections. Additionally, web applications that experience strain on backend resources due to zero-downtime deployments can leverage the restart-free scaling so that horizontal autoscaling does not overwhelm those connected resources.

See the docs on Horizontal Autoscaling here and our updated guide for getting started with Horizontal Autoscaling.

New Default Region for Canadian Cross-Region Backups & New "Stop Timeout" Setting

New Default Region for Canadian Cross-Region Copy Backups: ca-west-1 (Canada West) is now the default region for cross-region copy backups initiated from ca-central-1.

New "Stop Timeout" Setting for App Services: "Stop Timeout" can now be configured on a per-service basis, controlling how long service containers are allowed to continue working after the initial SIGTERM, before the hard shutdown. This setting can be configured through the UI, CLI, or Terraform. See the docs here for more information.
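A longer Stop Timeout only helps if the process inside the container reacts to SIGTERM by finishing its current work and exiting. The sketch below shows one common way to do that in a worker loop; the names `handle_sigterm` and `run_worker` are hypothetical, and real job processing would replace the placeholder line.

```python
import signal
import threading

# Set when SIGTERM arrives; the work loop checks it between jobs.
shutdown_requested = threading.Event()


def handle_sigterm(signum, frame):
    """Record the shutdown request instead of exiting immediately."""
    shutdown_requested.set()


def run_worker(jobs):
    """Process jobs in order, stopping cleanly once shutdown is requested."""
    completed = []
    for job in jobs:
        if shutdown_requested.is_set():
            break  # stop picking up new work so the process can exit in time
        completed.append(job)  # placeholder for real job processing
    return completed


if __name__ == "__main__":
    signal.signal(signal.SIGTERM, handle_sigterm)
    print(run_worker(["job-1", "job-2", "job-3"]))
```

The in-flight job always runs to completion, so the Stop Timeout should be at least as long as the slowest single job you expect.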

April Enhancements - UI and SCIM Improvements

This month, the Aptible team focused on addressing a number of enhancements and bugs reported by our customers while we wrap up some new features (check out our roadmap for more info):

UI:

The Stacks and Environments UI pages now show "Total estimated monthly costs"

A Datadog site can now be selected for metric drains via the UI

Container ports for TCP and TLS endpoints can be updated via the UI

SCIM Improvements:

Enhanced SCIM compatibility with Microsoft Entra and Ping Identity

Improved error response messages for better feedback to Identity Providers

March Enhancements - Operation Logs, Terraform, CLI, and more

This month, the Aptible team focused on addressing a number of enhancements and bugs reported by our customers:

Operation Logs:

Better differentiate the output of container logs from platform logs in operations. For example, the output of your migrations during before_deploy steps, or the output from your container if it is not passing release health checks

Terraform Provider:

Destroying an Environment now properly handles (deprovisions) any log drain created by Aptible for the purpose of streaming logs via the CLI with aptible logs

When endpoints, databases, or replicas are created but fail to provision, they are now kept in Terraform state so that attempting to re-apply will replace them (destroy and create a replacement), preventing users from having to clean up outside of Terraform.

CLI:

Fixed log streaming for Backup restores when restored to a different stack than the original database.

Added additional metadata about the Stack in the JSON output of environment:list

Added additional metadata about the container profile in the JSON output of db:list

Deploy to Aptible Github Action:

Updated CLI version

UI:

The Stack overview page now has the ability to sort columns (i.e. sort by costs)

MySQL 8.4 Support

We are excited to announce the release of MySQL 8.4 on Aptible, the first Long-Term Support (LTS) release of MySQL. As an LTS version, MySQL 8.4 releases will focus on necessary fixes, minimizing the risk of breaking changes between updates. You can read about the changes introduced in MySQL 8.4’s What Is New and about the reasoning behind MySQL’s new versioning model in their blog post.

If you want to upgrade or have questions about MySQL 8.4, please contact Aptible Support.

CLI Version 0.24.3 & Autoscaling Updates

CLI Updates:

In an effort to improve the CLI and better understand how customers are using it, we have added telemetry to our commands. This telemetry data is sent to an internal system that we already use throughout our platform, but users might see an extra request being sent to tuna.aptible.com when a command is run.

We have significantly improved the performance of our commands that list resources (e.g. environment:list, apps, db:list, endpoints:list, log_drain:list, and metric_drain:list)

Install the latest version of the CLI here.

Other releases and improvements:

Autoscaling Update: The Post Release Cooldown scaling policy configuration will now always ignore this period of time following a release in the metrics used to evaluate scaling, even if it would be included in the current lookback. You can now use this setting to ignore spiky CPU/memory usage on service startup without having to tune your lookback. The default has also been lowered from 5 minutes to 1 minute. Note: This setting is available on both Horizontal and Vertical Autoscaling.
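Conceptually, the cooldown removes a slice of samples from whatever window the autoscaler is evaluating. The sketch below models that idea with plain tuples. The function name, the sample format, and the choice to keep samples recorded before the release are all assumptions for illustration, not a description of the autoscaler's internals.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

Sample = Tuple[datetime, float]  # (timestamp, CPU or memory usage)


def samples_for_scaling(
    samples: List[Sample],
    release_time: datetime,
    cooldown: timedelta = timedelta(minutes=1),  # new default per this entry
) -> List[Sample]:
    """Drop samples recorded during the cooldown window after a release."""
    cooldown_end = release_time + cooldown
    return [
        (ts, value)
        for ts, value in samples
        if not (release_time <= ts < cooldown_end)
    ]


release = datetime(2025, 1, 1, 12, 0, 0)
samples = [
    (release - timedelta(seconds=30), 40.0),  # before the release: kept
    (release + timedelta(seconds=30), 95.0),  # startup spike: ignored
    (release + timedelta(seconds=90), 45.0),  # after the cooldown: kept
]
print([value for _, value in samples_for_scaling(samples, release)])
```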

Exclude Databases from Automatic Backups via Terraform

Databases (including Replicas) can now be excluded from future automatic backups via Terraform. Previously, this setting was only available in the Aptible Dashboard.

To exclude a database from the backup retention policy, therefore preventing new automatic backups from being created, use the backup_retention_policy block and set enable_backups to false.

CLI Version 0.24.1 Released

CLI Updates:

--env option: All commands now accept --env as an alias for --environment.

Update Container Profile using app:scale: The aptible app:scale command now allows you to update the container profile without changing the container size or number of containers.

Container Profile included in aptible services: The aptible services command now includes the container profile, in addition to container size and count.

Default Behavior for backup commands: The backup:list command now lists all backups instead of defaulting to a one-month lookback. The backup:orphaned command also lists all orphaned backups instead of defaulting to a one-year lookback.

New version of CLI available here for download.

Other recent enhancements/releases:

PostgreSQL 12 + RabbitMQ 3.12 are now marked EOL

Added operation log URLs in multiple locations (operation headers, disconnection messages, and the operation logs themselves), so you can easily locate logs in the UI even if your client crashes, your connection fails mid-operation, or you want to quickly share the logs without copy/pasting hundreds of lines

Horizontal Autoscaling based on CPU for App Services

Horizontal Autoscaling (HAS) for App Services is now generally available, expanding our autoscaling functionality alongside Vertical Autoscaling for App Services (available on the Enterprise plan).

When this feature is enabled, the number of containers for a given App Service will be automatically scaled based on CPU usage. This ensures cost efficiency and performance—without the need for manual scaling, saving engineering time while improving app reliability.

This feature is available on Dedicated Stacks on the Production and Enterprise plans. Note: you can upgrade or modify your plan anytime directly in the "Plans" page in the Dashboard.

See Horizontal Autoscaling docs here including our guide for configuring Horizontal Autoscaling here.

Activity Filters, Improved Cost Estimates, and Ask ChatBot

Activity Filters

Activity can now be filtered by Resource Type, Operation Type, and Operation Status, making it easier to find operations quickly!

Other recent improvements/releases:

Cost estimates in UI now reflect custom pricing rates

"Ask Chatbot" option when submitting a support ticket

RabbitMQ 3.13 and 4.0 Support

We’re happy to announce that RabbitMQ 3.13 and 4.0 databases are now available on Aptible. If you’re interested in upgrading an existing RabbitMQ database, please reach out to Aptible Support for help.

Automatic User Provisioning via SCIM

We are excited to announce that SCIM (System for Cross-domain Identity Management) is now available on the Production and Enterprise plans.

SCIM facilitates automated user provisioning and deprovisioning and extends to group provisioning and linking to existing groups, significantly reducing the manual effort involved in user and group management while minimizing potential security risks. This enhancement equips our customers with robust user and group management capabilities, enhancing security and streamlining operations throughout their teams.

To start using this feature, see our SCIM Implementation Guide.

Container Right-Size Recommendations

Container right-sizing recommendations are now shown in the Aptible Dashboard for App Services and Databases.

For each resource, one of the following scaling recommendations will show:

Rightsized, indicating optimal performance and cost efficiency

Scale Up, recommending increased resources to meet growing demand

Scale Down, recommending a reduction to avoid overspending

Recommendations are updated daily based on the last two weeks of data, and provide vertical scaling recommendations for optimal container size and profile. Use the auto-fill button to apply recommended changes with a single click!

To begin using this feature, navigate to the App Services or Database page in the Aptible Dashboard.

TLSv1.3 Support

TLSv1.3 is now available for HTTPS and Legacy ELB Endpoints, bringing additional security to your Apps. You can enable TLSv1.3 by configuring SSL_PROTOCOLS_OVERRIDE according to our documentation.

Postgres 17 Support

We are happy to announce that PostgreSQL 17 is now available on Aptible. PostgreSQL 17 introduces several updates, such as new JSON-related features and performance improvements across multiple key processes like vacuum and WAL processing. You can learn more in PostgreSQL’s official release post.

New Billing System

As of earlier this month, we began migrating customers to our new billing system, introducing several enhancements designed to improve visibility and simplify tracking costs.

Accumulated Usage: You can now see your usage as it accumulates throughout the month. The Draft Invoice displays your usage so far, and you can drill down further to view detailed usage by day and line item.

Projected Monthly Costs: We’ve relocated projected invoice information to the Stack and Environment pages, where it’s now labeled as “Estimated Monthly Costs.” This change aims to provide more contextual insight where it matters most.

Invoice History: The Invoice History page will show your current and future invoices on the new billing system. For access to historical invoices, simply click the link at the top of the billing portal.

PDF Invoice Downloads: You can now download invoices directly to PDF from the UI, making it easier to share and archive your billing records.

Improved Speed: Invoices now load significantly faster!

For more details, visit the billing dashboard or reach out to our support team if you have any questions.

Simplified Plans: Development, Production, Enterprise

We’re excited to announce that we’ve streamlined our plans to better meet the needs of organizations at different stages of growth. Our plans have been simplified from Starter, Growth, Scale, and Enterprise to just three tiers: Development, Production, and Enterprise.

One key difference with these new plans is that we've removed scaling limits. Initially, we introduced limits to help customers keep costs under control as they grew. However, we’ve learned that these restrictions were often more of a hindrance than a help. In response, we’ve eliminated these limits, allowing you to scale without boundaries. Instead, we’ve focused on giving you greater visibility into costs so you can manage your infrastructure spending effectively as you grow.

If you’re currently on the Starter, Growth, or Scale plans—your plan will remain as is, and no action is required. However, if you'd like to explore the new Development or Production plans, you can upgrade at any time. For more details and to review specifics, visit our pricing page.

Vertical Autoscaling for App Services

Vertical Autoscaling is now generally available on the Enterprise plan!

Keep your apps right-sized effortlessly with Vertical Autoscaling, which automatically adjusts container profiles based on real-time CPU and RAM usage. Learn more in our docs.

Zero-Downtime Deploys for gRPC Apps

You can now add gRPC endpoints to your gRPC apps, which enable zero-downtime deploys.

For more information please check the documentation

Zero-Downtime Deploys for Services without Endpoints

You can now force a zero-downtime deployment strategy for services without endpoints. The strategy leverages either a simple uptime healthcheck or Docker's healthcheck mechanism to ensure your services stay up during deployments.

For more information please check the documentation

InfluxDB 2.7 Released

We’re happy to announce that InfluxDB 2.7 is now available on Aptible. InfluxDB 2.X introduces many new tools to visualize and process your data alongside Flux, InfluxData’s data scripting language. You can read about these changes and more in InfluxDB’s release blog post. If you are interested in upgrading an existing InfluxDB database, please contact the Aptible support team for assistance.

Additionally, for those who prefer SQL-like queries over Flux, we have no plans to deprecate InfluxDB 1.8 at this time. We intend to continue making the older version available as a part of our managed database offering until InfluxDB 3.0 OSS is released or any security concerns arise.

Environment and Stack Cost Estimates

Estimated monthly costs are now shown for environments and stacks in the Aptible Dashboard! The estimates reflect the cost of running the current resources for one month. They are updated automatically as resources are added or scaled. Please note: an estimate does not represent your actual usage for the month (ongoing scaling operations or deprovisioned resources are not reflected).

Success and Failure hooks

As an expansion to before_release, add the following hooks within your .aptible.yml to set up better automation around app lifecycle events on Aptible:

before_deploy (renamed from before_release. before_release is now deprecated, but will continue to work)

after_deploy_success

after_restart_success

after_configure_success

after_scale_success

after_deploy_failure

after_restart_failure

after_configure_failure

after_scale_failure

Improved Resource Scaling Options

We released a set of changes to our clients (UI, CLI, and Terraform) to make it easier for users to provision Apps and Databases with the desired scaling options. Previously, this had to be accomplished in two steps. For example, previously in the CLI, if a user was creating a PostgreSQL Database using our RAM Optimized container profile and 4000 IOPS, they would have to:

Create the database with aptible db:create (which defaults to our General Purpose container profile and 3000 IOPS)

Then use the web UI to immediately scale the resource

This was a disjointed user experience, and we are happy to announce that we now have feature parity across all of our clients.

Aptible CLI

Users can now provide scaling options (container profile, container count, container size, disk size, or IOPS) upon creation of an App or Database via the CLI.

Now all of these options can be provided in a single command:

aptible db:create --container-profile r --container-size 1024 --iops 4000

The same changes also apply to:

aptible db:replicate

aptible backup:restore

The same changes also apply to deploying an App for the first time: aptible deploy --container-profile r --container-size 1024 --container-count 2

Users can now provide --container-profile to aptible apps:scale and aptible db:restart

A new version of the CLI is available here for download.

Aptible Terraform

Users can now provide disk IOPS for Database and Replica resources

We improved the performance of scaling an App resource

Aptible UI

When creating a Database resource, users can now provide scaling options (container profile, container size, disk size, and IOPS)

New Default Backup Retention Policy

As of 07/25/2024, all new Environments will have a default backup retention policy of:

30 daily backups

12 monthly backups

6 yearly backups

Cross-region copies disabled

Keep final backups enabled

This configuration still maintains 6 years of backups, as the previous default did, but reduces the overall number of backups retained over that period by over 6x, saving a significant amount on backup costs within a few months of databases coming online.
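As a rough illustration of the new default, the sketch below counts the automatic backups a single database would retain once the policy reaches steady state. It assumes each tier (daily, monthly, yearly) is counted separately and that enabling cross-region copies adds one copy per backup. The `RetentionPolicy` class and its field names are hypothetical and are not part of any Aptible API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RetentionPolicy:
    """Hypothetical container for the policy values listed above."""
    daily: int
    monthly: int
    yearly: int
    cross_region_copies: bool = False


def retained_backups(policy: RetentionPolicy) -> int:
    """Upper bound on automatic backups kept per database at steady state.

    Assumption: tiers are counted separately, and a cross-region copy
    adds one extra backup per original.
    """
    originals = policy.daily + policy.monthly + policy.yearly
    return originals * 2 if policy.cross_region_copies else originals


new_default = RetentionPolicy(daily=30, monthly=12, yearly=6)
print(retained_backups(new_default))  # 48
```

Plugging an existing environment's values into the same function gives a quick way to compare its policy against the new default.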

We've also added the backup recommendations in-app to make it easier to optimize existing environments.

Backup Retention Policy management in Terraform

Backup Retention Policies can now be managed via Terraform.

The backup_retention_policy block can be used to minimize cost or extend retention for Terraform-managed environments. Additionally, keep_final backups can be disabled via Terraform; this allows terraform destroy to work more efficiently on environments, as you no longer have to manually clean up final backups within the CLI or UI.

Database End-Of-Life Policy Updates

Database versions without active developer or community support will now be marked as (EOL) in the Dashboard. Additionally, after a 90-day period, EOL databases will be marked as (DEPRECATED) and will no longer be provisionable through the UI or CLI.

Following this policy, you will be unable to provision the following databases on October 31, 2024:

Redis 6.0 and below

PostgreSQL 11 and below

MySQL 5.7 and below

Elasticsearch 7.9 and below

MongoDB 3.6 and below

RabbitMQ 3.9 and below

CouchDB

Notably, MongoDB 4.0 and Elasticsearch 7.10 will continue to be offered indefinitely, and CouchDB will no longer be available for new provisions on October 31st.

While this will not affect the functionality of any currently deployed databases, we encourage folks using EOL databases to upgrade to take advantage of new features and security measures introduced in later releases. We’ve included a short FAQ below, but if you have any questions regarding this new policy, please reach out to the support team.

FAQ:

Q: Will this affect my ability to backup, restore, or replicate databases on EOL or deprecated versions?

A: No, you will still be able to interact with existing databases in the same manner as before. However, new product features or improvements may not be compatible.

Q: I urgently need to provision a specific database version that is no longer available on Aptible. Can I have an exception?

A: Please get in touch with Aptible Support with your specific request, and we’ll work with you to find an alternative solution.

Q: How can I identify my databases running on end-of-life versions?

A: You can search for "EOL" in the dashboard to filter for databases running on end-of-life versions.

Operation Blocking Improvements & New SSH Banner

Operation Blocking Improvements

Our users have given us feedback that certain operations blocking each other can really slow them down at critical moments. Based on that feedback, we've implemented improvements to operations which allow you to work more effectively. Specifically, this includes:

Deploy/scale operations no longer block SSH sessions: Users can now SSH into an app while a deployment or scaling operation is ongoing. This change makes quick debugging easier.

Database backups no longer block database operations: Database operations, such as scaling, will no longer be blocked by automatic backups. This change makes database management more efficient.

Services within a given app can be scaled simultaneously: You can now scale multiple services within a given app at the same time, without waiting for each to complete. This update enables more effective and flexible service scaling.

New 'Last Deploy Banner' in SSH Sessions

With the new ability to SSH into an app during a deployment, we have updated our welcome banner for SSH sessions. The new banner displays:

The date and time of the last completed deploy operation

The source and reference for the deployed code

Role Management Improvements

We made some changes to the user experience for Role Management. Functionally, roles and permissions have not changed, just the way users interact with roles has been updated:

Users can now more easily view permissions within an Environment, App, and Database. Within a detail page, click the “Settings” tab and you will be able to see all the permissions associated with that entity.

In the role management page, users can now filter by Role, User, and Environment.

We have condensed the role management view to make it easier to navigate and find exactly what you are looking for.

We also now provide an export to CSV button that will print the roles, members, environments, and permissions currently filtered.

We have also condensed the permission editor and streamlined making changes in an effort to make it easier to manage permissions.

New Container Recovery Log Message

Aptible will now display a container recovery initiated message when a container has restarted due to an event outside of its normal lifecycle. This change helps better differentiate between container restarts due to application deployment and a container recovery event.

VPN Tunnel updates

Over the next several weeks, the Aptible team will be migrating VPN Tunnels to new appliances to ensure continued and reliable service. During this time, our team will be reaching out to coordinate the migration.

Once the migration is complete, additional VPN Tunnel details will be available in the UI. More specifically, VPN Status will be shown for more effective monitoring and troubleshooting.

Read more about managing VPNs and their status here in our docs.

If you'd like to schedule a migration to the new appliance ahead of time, please contact our support team!

CLI Version 0.19.9 Released

New commands for managing backup retention policies via the CLI

Environment Backup Retention Policies can now be managed via the Aptible CLI. Use aptible backup_retention_policy to get the current policy for an Environment and aptible backup_retention_policy:set to change the policy.

Improvements to config:get command

aptible config:get can now be used to get a single value from an App's Configuration.

Dashboard App Dependencies Improvements

Previously, the "Dependencies" tab when viewing an App's details on app.aptible.com would only show Aptible Databases that could be detected from the App's configuration (environment variables). Now, it can detect dependencies on other Aptible Apps by comparing domain names detected in the App's configuration with the domains associated with Endpoints. While this method of dependency detection cannot find every dependency an App has, we believe it is a positive step toward making everyone's Aptible architecture easily discoverable.
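The domain-matching idea described above can be sketched roughly as follows. This is an illustration only, not Aptible's implementation: the function name, the input shapes (a dict of environment variables, and a dict mapping lowercase Endpoint domains to App handles), and the example values are all assumptions.

```python
import re
from urllib.parse import urlsplit

# Loose pattern for hostname-like tokens such as "api.example.com".
HOSTNAME_RE = re.compile(r"[A-Za-z0-9-]+(?:\.[A-Za-z0-9-]+)+")


def detect_app_dependencies(config, endpoint_domains):
    """Return sorted handles of Apps whose Endpoint domains appear in config.

    config: environment variable name -> string value (hypothetical shape).
    endpoint_domains: lowercase Endpoint domain -> App handle (hypothetical).
    """
    found = set()
    for value in config.values():
        candidates = set(HOSTNAME_RE.findall(value))
        try:
            # URLs with credentials or ports parse more reliably this way.
            host = urlsplit(value).hostname
        except ValueError:
            host = None  # not a well-formed URL; rely on the regex matches
        if host:
            candidates.add(host)
        for candidate in candidates:
            handle = endpoint_domains.get(candidate.lower())
            if handle:
                found.add(handle)
    return sorted(found)


config = {
    "DATABASE_URL": "postgresql://user:pass@db-example.internal:5432/db",
    "BILLING_API": "https://billing.example.com/v1",
    "LOG_LEVEL": "info",
}
endpoint_domains = {
    "billing.example.com": "billing-api",
    "other.example.com": "other-app",
}
print(detect_app_dependencies(config, endpoint_domains))  # ['billing-api']
```

As the entry notes, matching on configuration values cannot find every dependency: a hostname that is built at runtime or stored outside the App's configuration would be missed by a sketch like this one.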

Introducing Sources

We have introduced a new “Sources” page—designed to track what code is deployed where by linking your apps to their source repositories.

This integration allows for a comprehensive view of deployed code across your infrastructure. You can navigate the Sources page to identify groups of apps sharing the same code source and drill into the Source Details for insights into current and historical changes of code deployed.

TLS 1.0/1.1 Deprecation

To enhance our platform security, Aptible is enforcing a minimum TLS version of TLSv1.2 for all Aptible APIs and sites starting 5/1/2024. Support for TLSv1.0 and TLSv1.1 will be discontinued at this date.

This affects Aptible’s own APIs and sites, including:

Your App Endpoints and Database Endpoints are unaffected by this change.

All modern browsers and operating systems already support TLSv1.2 natively. However, if your client is using TLSv1.0 or TLSv1.1, you must update it to use TLSv1.2 to continue using the Aptible APIs and sites listed above.

For more information on TLSv1.2 compatibility, see this documentation.
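For clients written in Python, one way to make sure connections never fall back to TLSv1.0 or TLSv1.1 is to pin the minimum protocol version on the SSL context. This is a minimal sketch using only the standard library `ssl` module; the helper name `make_tls12_context` is made up for illustration.

```python
import ssl


def make_tls12_context() -> ssl.SSLContext:
    """Build a client context that refuses anything older than TLSv1.2."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_tls12_context()
print(context.minimum_version.name)  # TLSv1_2
```

The resulting context can be passed to standard library clients, for example `urllib.request.urlopen(url, context=context)`.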

RabbitMQ 3.12 Released

We are happy to announce that Aptible now supports provisioning RabbitMQ 3.12 databases.

MySQL Minor Version Release 8.0.33

We’ve released a minor version update for MySQL: 8.0.33. This will automatically apply upon the next restart or reload of your MySQL databases.

Configuration Changes

Below are the listed changes that you should be aware of as they are a change in behavior for the current configuration of MySQL.

The SSL Cipher is changing from DHE-RSA-AES256-SHA256 to the new default of TLS_AES_256_GCM_SHA384

TLS 1.0 and 1.1 are no longer supported as of this version. Only TLS 1.2 and 1.3 are available.

If you have any questions or concerns, please contact our Support Team.

New Backup Settings: Yearly Backups & Exclude DB from Backups

Yearly Backups

Aptible now supports Yearly automatic backups.

For new and existing environments, the yearly retention policy will be set to 0. We highly recommend reducing the frequency of daily and monthly automatic backups when implementing yearly backups as a cost-optimization measure.

See our docs for more information on automatic yearly backups

Exclude DB from Backups Setting

We've introduced a per-database setting which allows for the ability to exclude that database from new automatic backups. Please note: this does not automatically purge previously taken backups.

Postgres 15 and 16 are now available on Aptible

Aptible is releasing PostgreSQL versions 15 and 16 as a part of our managed database service offering.

Please make note of the following significant changes related to this release:

Aptible will not offer in-place upgrades to PostgreSQL 15 and 16 because of a dependent change in glibc on the underlying Debian operating system. Instead, the following options are available to migrate existing pre-15 PostgreSQL databases to PostgreSQL 15+.

Upgrade PostgreSQL with logical replication

contrib images are being consolidated. Previously, we have maintained two separate PostgreSQL images: a contrib image that included commonly requested extensions and a standard image with only a few critical extensions like pglogical. Starting with PostgreSQL 15, we will no longer maintain a separate image. Instead, we will be bundling extensions into our standard PostgreSQL image. If there is an extension you’d like to see added, please reach out to the team through our support portal.

Platform Improvements: New EC2 hardware, CPU Shares, and maintenance commands

The Aptible team has performed maintenance on all customer accounts, which has allowed us to implement the following platform improvements:

Next generation of EC2 hardware: We’ve migrated “General Purpose” containers to the next generation of EC2 hardware for overall better performance

Implemented CPU Shares and deprecated CPU Isolation (formerly CPU limits): Up until now, customers with CPU Isolation: Disabled have been able to take advantage of additional CPU resources beyond their allocated limit due to traditional CPU Limits not being enabled and the occasional scheduling of containers on larger infrastructure hosts, which allowed for additional CPU usage. We are transitioning to a CPU-share model with right-sized host matching to improve system efficiency and predictable performance. To provide a practical example, a given app could be running a single 4GB container on a 16GB infrastructure host by itself due to several contributing factors. This container would be allocated a single full CPU but could exceed that by up to 4x (400% CPU utilization). With this new model, this container will be rescheduled on a host closer in size to the container. When the container overruns its single full CPU allocation and reaches the limits of the host’s CPU capacity, it will be throttled to ensure the stability of the host and, by extension, the service. This necessary change lays the groundwork for some exciting upcoming changes, including autoscaling.

Maintenance Commands: We have added two new Aptible CLI commands to further simplify the process of ensuring the required App and Database restarts are complete: aptible maintenance:apps and aptible maintenance:dbs. These commands list Apps and Databases that are required to be restarted to complete any outstanding maintenance and allow customers to track which resources will be restarted by Aptible's SRE team at the indicated maintenance window. Please upgrade your Aptible CLI now to version 0.19.7 or newer to use these commands.

Improved API Performance
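The arithmetic behind the 4GB-on-16GB example in the CPU Shares item can be sketched as follows. The ratio of one CPU per 4GB of RAM is an assumption inferred from that example (a 4GB container receiving a single full CPU), and the sketch further assumes the host's CPU count follows the same ratio. The function names are illustrative only.

```python
GB_PER_CPU = 4  # assumed ratio, inferred from the 4GB -> 1 CPU example


def allocated_cpus(container_gb: float) -> float:
    """CPU allocation for a container of the given RAM size."""
    return container_gb / GB_PER_CPU


def max_burst_factor(container_gb: float, host_gb: float) -> float:
    """How far a container alone on a host could exceed its CPU allocation."""
    host_cpus = host_gb / GB_PER_CPU
    return host_cpus / allocated_cpus(container_gb)


print(allocated_cpus(4))        # 1.0
print(max_burst_factor(4, 16))  # 4.0, i.e. up to 400% CPU utilization
print(max_burst_factor(4, 4))   # 1.0, no headroom on a right-sized host
```

Under these assumptions, matching the host size to the container size is what removes the unpredictable extra headroom.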

Reset 2 Factor Authentication

Account Owners can now reset a user's 2FA in the Members page under Settings. Account Owners can reset 2FA for all other users, including other Account Owners, but cannot reset their own 2FA. Once the Account Owner kicks off the reset, the selected user will receive an email with a link asking that they confirm and complete the 2FA reset.

New Dashboard: Endpoints page, Database management improvements, and more

We're excited to share further improvements to the new Aptible Dashboard, which is currently in Beta. The latest additions:

A new Endpoints page to view and manage all Endpoints within an organization

A new support request form directly via the Dashboard — built with smart suggestions

Apps and Databases can now be restarted via the Dashboard

Databases can be migrated to a new region with the Restart Database with Disk Backup and Restore settings

InfluxDB 2 Metric Drains are now supported via the new Dashboard

Environment Variables are now viewable and configurable via the Dashboard

Bug fixes

Introducing the new Aptible Dashboard: New navigation, per-resource pages, improved metrics, and more

We’re excited to announce a preview of our new Aptible Dashboard, which is currently in Beta. With this new Dashboard, we aim to improve our platform's navigation, speed, and usability — focusing on the overall developer experience.

We’re just getting started, but to kick things off, you can try the new Dashboard here with the following features:

An entirely fresh new look to the Dashboard

A new navigation with per-resource pages to hone in on the resources you are looking for, including dedicated pages for Stacks, Environments, Apps, and Databases

A new Activity page to easily view and manage operations, including operation logs

A new Deployments page to manage deployments triggered via the Dashboard

Omni-search for resources across the entire organization

In-app metrics now persist after deploys and restarts

In-app metrics now show a 1-week look back (in addition to hourly and daily) with more granularity than ever before

Redis 6.2 Release

Aptible now supports Redis 6.2. To upgrade your Redis database, please see our guide for “How to upgrade Redis”

CPU Limit Metric

Aptible will now include a “CPU Limit” metric in the metrics delivered through metric drains so users can understand app and database container performance better.

In the past, Aptible only offered the General Purpose container profile. Since this was standard across all containers, users could easily calculate the CPU limit based on the fixed CPU-to-RAM ratio. The release of RAM and CPU-Optimized container profiles, each with unique CPU-to-RAM ratios, introduced a new need for a CPU Limit metric.

You can compare the existing CPU Usage metric with the new CPU Limit metric to monitor when your app or database containers are nearing their CPU limit, and scale accordingly. However, if CPU Isolation is disabled, the container will have no CPU limit, and the CPU Limit metric will return 0. If you have not enabled CPU Isolation, we highly recommend doing so, as you may experience unpredictable performance in its absence. If CPU Isolation is enabled, the metric returns the allocated CPU per millisecond. For example, a CPU limit of 1 in the dashboard will be reflected as 1000 by the metric, or a CPU limit of .5 in the dashboard will be reflected as 500 by the metric. Please contact Aptible Support if you have any questions before the release.
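The mapping between the dashboard value and the metric value described above can be written as a small conversion helper. This is a sketch based only on the two examples given (1 becomes 1000, .5 becomes 500) and the statement that the metric is 0 when CPU Isolation is disabled; the function names are hypothetical, and `None` is used here to model a container with no CPU limit.

```python
from typing import Optional


def dashboard_limit_to_metric(cpu_limit: Optional[float]) -> int:
    """Convert a dashboard CPU limit to the CPU Limit metric value.

    None models CPU Isolation being disabled, which is reported as 0.
    """
    if cpu_limit is None:
        return 0
    return round(cpu_limit * 1000)


def metric_to_dashboard_limit(metric_value: int) -> Optional[float]:
    """Inverse conversion; a metric value of 0 means no CPU limit."""
    if metric_value == 0:
        return None
    return metric_value / 1000


print(dashboard_limit_to_metric(1))     # 1000
print(dashboard_limit_to_metric(0.5))   # 500
print(dashboard_limit_to_metric(None))  # 0
```

When building alerts on top of this metric, it is worth handling the 0 case explicitly so that containers without a limit are not treated as permanently over their limit.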

Please note: as part of this change set, it became necessary to change the host tag to host_name for referencing the Container Hostname (Short Container ID). Any pre-built queries on customer metrics platforms which rely on the host tag may need to be changed to reference host_name instead.

Reliability Team Operation Notes

Operations completed by the Aptible Reliability Team will now include a note indicating the reason for the operation (for example: maintenance restarts).

IP Filter Limit Increased

The maximum number of IP sources (aka IPv4 addresses and CIDRs) per Endpoint available for IP filtering has been increased from 25 to 50. If you've created multiple Endpoints as a workaround in the past, you may want to consider consolidating back to one Endpoint.
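Before consolidating several Endpoints into one, it can help to check that the merged list of sources is valid and fits within the new limit. The sketch below uses Python's standard `ipaddress` module; the function names are hypothetical, and the lenient parsing (`strict=False`, which accepts CIDRs with host bits set) is an assumption rather than a statement about how Aptible validates input.

```python
import ipaddress

MAX_IP_SOURCES = 50  # per-Endpoint limit stated above


def validate_ip_sources(sources):
    """Parse IPv4 addresses and CIDRs and enforce the per-Endpoint limit.

    Returns the parsed networks; raises ValueError on invalid input or
    when there are more sources than the limit allows.
    """
    if len(sources) > MAX_IP_SOURCES:
        raise ValueError(
            f"{len(sources)} sources exceeds the limit of {MAX_IP_SOURCES}"
        )
    # A bare address such as "203.0.113.7" is parsed as a /32 network.
    return [ipaddress.IPv4Network(source, strict=False) for source in sources]


def consolidate(*endpoint_sources):
    """Merge source lists from several Endpoints, dropping exact duplicates."""
    merged = sorted({s for sources in endpoint_sources for s in sources})
    validate_ip_sources(merged)
    return merged


print(consolidate(["203.0.113.7"], ["203.0.113.7", "198.51.100.0/24"]))
# ['198.51.100.0/24', '203.0.113.7']
```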

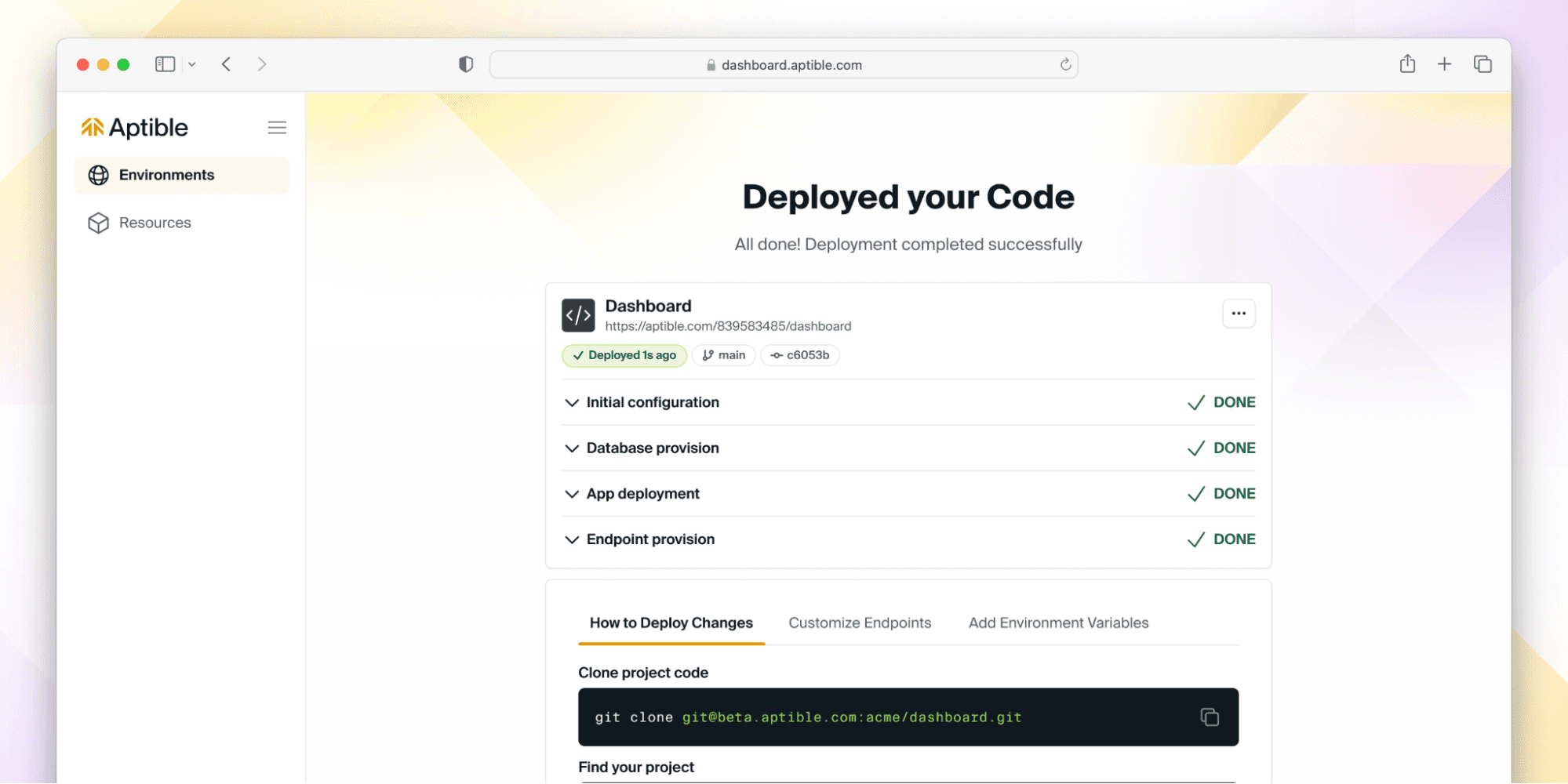

Deploy Code from Dashboard

This year, we announced that we are refocusing our efforts with the goal of delivering ✨magical experiences✨for developers. To deliver on that mission, we created an entirely new experience within the Aptible Dashboard, which allows users to easily deploy code to a new environment with the necessary resources. Whether you're just starting out on Aptible or a seasoned power user, we want to continue to enable you to have a seamless deployment process.

Without further ado, we are happy to introduce you to the new Deploy Code button within the Aptible Dashboard.

The Deploy Code process will guide you through the following steps:

Set up an SSH Key: Authenticate with Aptible by setting up your SSH key (if you haven't done so already)

Create a new environment: Set up a new environment where your resources will reside

Select app type: Choose the type of app you want to deploy, whether it's from our starter templates, the Aptible demo app, or your own custom code

Push your code: Deploy your code to Aptible using a git push

Create databases (optional): Create and configure managed database(s) for your app

Set and configure environment variables (optional): Customize your app by setting and configuring variables

Set Services and Commands (optional): Fine-tune your app's services and commands

View Logs: Track the progress of your resources as they deploy, and if something goes wrong, you can edit your configuration and then rerun the deployment, all within the Dashboard

And voilà - your code is deployed! ✨

But don’t take our word for it - give it a try here, and if you have ideas or feedback - let us know! We are actively iterating on this flow, so we’d love to hear from you about what you’d like to see next.

Granular Permissions & Deleting Custom Roles

We are excited to announce Granular Permissions for fine-tuning user access on the Environment level! Formerly, Aptible had a simple read/write permission scheme, but as part of this release, we've introduced 2 new read permissions and 6 new write permissions, which can be assigned using Custom Roles. Read the docs here or read our blog post.

Modifying a Custom Role

Deleting Custom Roles

Custom Roles can now be deleted within the Aptible Dashboard. You can do this by navigating to the Custom Role you would like to delete, then navigating to the Settings tab.

Deleting a Custom Role

Support for IKEv2 VPN Tunnels

We are excited to announce we've updated our site-to-site VPN tunnel implementation. This update comes with support for IKEv2 VPN tunnels for greater reliability and security, as well as improved compatibility with Azure-based connections.

Learn more about setting up VPN tunnels here.

To request an existing VPN tunnel be migrated to IKEv2, contact Aptible Support.

Support for SSH Key ED25519 Algorithm

For improved compatibility and security, we've added ED25519 to the supported SSH key algorithms we accept. You can now generate ED25519 SSH keys. You can manage your keys here within the Aptible Dashboard.

Manage Environments, Modify Container Profiles, Look up Stacks - all via Terraform

aptible_environment resources can now be managed through Terraform. Learn more here about resource attributes and configuration.

Modify Container Profiles via Terraform

container_profile can now be modified for App services and Databases through Terraform. This can be used to select a workload-appropriate Container Profile for a given service: General Purpose, CPU Optimized, or RAM Optimized. Learn more here about resource attributes and configuration.

Look up Stacks via Terraform

aptible_stack data sources are now available through Terraform. This can be used to look up Stacks by name. Learn more here about resource attributes.

Provision Metric Drains and pre-built Grafana dashboards via Terraform

aptible_metric_drain resources can now be managed through Terraform. Learn more here about resource attributes and configuration.

Pre-built Grafana dashboards and alerting

You can now use the aptible/metrics Terraform module to provision Metric Drains with pre-built Grafana dashboards and alerts for monitoring RAM & CPU usage for your Apps & Databases. This simplifies the setup of Metric Drains so you can start monitoring your Aptible resources immediately, all hosted within your Aptible account!

App metrics in the pre-built Grafana dashboard

Database metrics in the pre-built Grafana dashboard

Alert rules in the pre-built Grafana dashboard

Retain your logs in the event of a disaster with S3 Log Archiving

With our new S3 Log Archiving functionality, you can now configure log archiving to an Amazon S3 bucket owned by you! This feature is designed to be an important complement to Log Drains, so you can retain logs for compliance in the event your primary logging provider experiences delivery or availability issues.

By sending these files to an S3 bucket owned by you, you have the flexibility to set retention policies as needed - with the security of knowing your data is encrypted in transit and at rest. Decryption is handled automatically upon retrieval via the Aptible CLI.

Learn more about setting up S3 Log Archiving. When you're ready to finalize the setup, contact Aptible Support and provide the following information:

Your AWS Account ID

The name of your S3 bucket to use for archiving

Manage Log Drains via Terraform & Terraform Endpoint Bug Fix

You can now manage aptible_log_drain resources through our Terraform Provider. Learn more here about resource attributes and configuration.

Terraform Endpoint Bug Fix

Previously, output from the Terraform Provider suggested that Endpoint placement could be changed in place (e.g. external to internal), but this cannot be done without a destructive operation (e.g. destroy and recreate). ForceNew now occurs on Endpoint placement changes in the Aptible Terraform Provider. This will result in an Endpoint being destroyed and then recreated.

Increased Scaling Sizes within the Dashboard, New Renaming Commands & Improved Terraform Error Messages

We've updated the Aptible Dashboard, so all supported Disk and Container sizes are available for scaling. Previously, the Aptible CLI supported more scaling sizes than the Aptible Dashboard.

New Renaming Commands

You can now rename apps, databases, and environments via the Aptible CLI using these new commands:

aptible environment:rename Learn more

aptible apps:rename Learn more

aptible db:rename Learn more

Previously, this could only be done by the Aptible Support team.

Improved Terraform Error Messages

The Aptible Terraform provider will now return more informative error messages from the server (for example, validation errors or other informative errors the Aptible backend may return) with a status code and message. Previously, the client surfaced errors as unclear pointers with no status code or message, making it impossible to read the backend errors themselves.

Downloadable Operation Logs

Prior to today, operation logs could only be accessed in real-time via the Aptible CLI, while an operation was running. This made debugging difficult in a number of scenarios:

Terraform operations, for which logs are not captured

CI-initiated jobs disconnected due to a CI service issue

Manual operations inadvertently disconnected via Ctrl-C

Manual operations initiated from the Aptible Dashboard

Today, we have released support for downloading logs for completed operations from the Aptible Dashboard or CLI, and also for attaching to real-time logs via Aptible CLI by providing the operation ID.

When you navigate to an App or Database in the Aptible Dashboard and view the Activity tab for that resource, you'll see a log download icon to the right of the timestamp:

Using the Aptible CLI, you can follow the logs of a running operation:

aptible operation:follow OPERATION_ID

...and view the logs for a completed operation:

aptible operation:logs OPERATION_ID

Rename App and Database Handles via Terraform & Authentication with Environment Variables via Terraform

Credentials for authenticating the Aptible Terraform provider can be set with the APTIBLE_USERNAME and APTIBLE_PASSWORD environment variables. Learn more here.

Rename App and Database handles

To change a handle for an existing resource, simply change the string passed into the handle field for a given Database or App and the change will be reflected within Aptible (both UI and CLI). The affected fields are the app handle and database handle.

Faster Scaling for Apps and Databases

We are excited to share that Aptible's underlying container scheduler has been radically improved. New EC2 instances provisioned during releases and scaling operations are now quicker and more reliable. Operations that previously took 15+ minutes to launch new EC2 instances will now take less than 3 minutes for the same process (5x faster)!

Optimize your infrastructure usage with Container Profiles and Enforced Resource Allocation

We are excited to announce that Container Profiles and Enforced Resource Allocation are now generally available on Aptible. By default, new Dedicated Stacks and all Shared Stacks have Enforced Resource Allocation enabled—meaning CPU Limits and Memory Limits are enabled and enforced for each container.

The new Container Profiles can be found under the Scale menu of your Aptible dashboard, for stacks with Enforced Resource Allocation enabled.

These improvements include new Container Profiles with different CPU to RAM ratios and a range of supported Container sizes, helping you to optimize your costs for different applications. The three types of Container Profiles currently available are as follows:

General Purpose: The default Container Profile, which works well for most use cases.

CPU Optimized: For CPU-constrained workloads, this profile provides high-performance CPUs and more CPU per GB of RAM.

Memory Optimized: For memory-constrained workloads, this profile provides more RAM for each CPU allocated to the container.

Aptible strongly recommends enabling Enforced Resource Allocation on existing Dedicated Stacks which don't currently enforce CPU Limits. Check out our FAQ on CPU Limits for more information about Enforced Resource Allocation!

Additional Database Version Support & Improved Deploy Times

We have released support for additional Redis and Postgres versions. Aptible Deploy is now compatible with Redis 6 and 7 and Postgres 9.6.24, 10.21, 11.16, 12.11, 13.7, and 14.4.

Improved Deploy Times

We have fixed a bug that was causing builds to miss the cache more frequently than they should, thereby increasing deploy times. A new release should make it more likely that builds will hit the cache.

If the build continues to be a pain point, we recommend switching to Direct Docker Image Deployment to gain full control over the build process.

Secure Access for Users on Aptible With SSO

We are thrilled to announce that Single Sign-On (SSO) is now available on all Aptible infrastructure at no additional cost. Formerly, this was only available to Enterprise customers.

With SSO, you can allow users of your organization to log in to Aptible using a SAML-based identity provider like Okta and GSuite.

UX improvements when scaling Services and setting up Log Drains

We've added small improvements to the end user experience when setting up Log Drains, and when scaling Services.

Scaling Services

Clicking Scale in a Service now shows a "drawer" with options shown to horizontally or vertically scale your services. The Metrics tab in the drawer allows you to quickly navigate to Container Service metrics to make better informed scaling decisions.

Outside of the drawer experience, the key change is the ability to vertically scale your services to every possible size right up to the instance's maximum allowed limit in the UI. Previously, scaling beyond 7 GB was only possible through the CLI. In addition, we've made it possible for you to see the CPU share per container based on the enforcement of CPU limits for better predictability in performance.

Setting up Log Drains

While the experience of setting up Log Drains is still the same, minor improvements were made to the overall visual design.

Build Docker images faster and increase DevOps productivity with multi-stage builds

Docker images are an essential component for building containers because they serve as the base of a container. Dockerfiles (lists of instructions that are automatically executed) are written to create specific Docker images. Avoiding large images speeds up the build and deployment of containers, thus contributing positively to your DevOps performance metrics.

Keeping image sizes low can prove challenging. Each instruction in the Dockerfile adds one additional layer to the image, contributing to the size of the image. Shell tricks had to be otherwise employed to write a clean, efficient Dockerfile and to ensure that each layer has the artifacts it needs from the previous layer and nothing else, all of which takes effort and creativity, in addition to being error prone. It was also not uncommon to have separate Dockerfiles for development and a slimmed down version for production, commonly referred to as the "builder pattern". Maintaining multiple Dockerfiles for the same project is not ideal as it could produce different results between development and production, making software development, testing and bug fixes unreliable when pushing new code.

Docker introduced multi-stage builds to solve for the above, which Aptible now supports when using Dockerfile Deploys. Please note that users deploying using the Direct Docker Image Deployment method on Aptible could have used multi-stage builds prior to this release.

Using multi-stage builds

With multi-stage builds, you use multiple FROM statements in your Dockerfile. Each FROM instruction can use a different base, and each of them begins a new stage of the build. You can selectively copy artifacts from one stage to another, leaving behind everything you don’t want in the final image.
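As a rough illustration of the idea, the shell sketch below writes a two-stage Dockerfile and counts its stages. The Go project, the image tags, the stage name `build`, and the paths are all assumptions chosen for the example, not Aptible requirements; run it in a scratch directory, since it overwrites any `Dockerfile` in the current directory.

```shell
#!/bin/sh
# Write a two-stage Dockerfile. The Go project, image tags, stage name and
# paths are illustrative assumptions only.
cat > Dockerfile <<'EOF'
# Stage 1: full toolchain, used only to compile the binary.
FROM golang:1.21 AS build
WORKDIR /src
COPY . .
RUN CGO_ENABLED=0 go build -o /out/app .

# Stage 2: small runtime image that receives only the compiled artifact.
FROM alpine:3.19
COPY --from=build /out/app /usr/local/bin/app
CMD ["app"]
EOF

# Each FROM line starts a new stage; this prints the number of stages (2).
grep -c '^FROM' Dockerfile
```

Only the last stage ends up in the final image, so the compiler and source tree from the first stage are left behind.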

You can learn more about using FROM instructions, naming build stages in your Dockerfile, picking up where a previous stage left off, and more here.

Improve security posture and efficiently pass audits with new Compliance Visibility Dashboard

Over the years, the Aptible product teams have learned that many teams would benefit from greater visibility into the security safeguards Aptible has in place across the infrastructure, and from insight into what they need to do to further improve their posture and reach a compliance goal.

To help with this, we’re excited to announce the newest Aptible feature: the Compliance Visibility Dashboard!

The Compliance Visibility Dashboard provides a unified view of all the technical security controls that Aptible fully enforces and manages on your behalf, as well as the security configurations you control in the platform.

Think of security controls as safeguards implemented to protect various forms of data and infrastructure, important both for compliance satisfaction as well as best-practice security.

Video explaining how the Dashboard works.

With this feature, you can not only see in detail the many infrastructure security controls Aptible automatically enforces on your behalf, but also get actionable recommendations about safeguards you can configure on the platform (for example, enabling cross-region backup creation) to improve your overall security posture and accelerate compliance with frameworks like HIPAA and HITRUST. Apart from being visualized in the main Aptible Dashboard, these controls, along with their descriptions, can be exported as a print-friendly PDF to share externally with prospects and auditors and gain their trust and confidence faster.

You can access the Compliance Visibility Dashboard by clicking on the Security & Compliance tab in the navigation bar.

Here’s documentation to learn more about using the Dashboard in greater detail.

Users with Read-Only access can no longer see Database credentials

Broadly speaking, two levels of access can be granted to Users through Aptible Roles on a per-Environment basis:

Manage Access: Provides Users with full read and write permissions on all resources in a particular Environment.

Read Access: Provides Users with read-only access to all resources in an Environment, including App configuration and Database credentials.

While Users with read access are not allowed to make any changes, or create Ephemeral SSH Sessions or Database Tunnels, they were still able to view credentials of their Aptible-managed Databases. This was possible either through the Database dashboard or through the CLI with the aptible db:url and APTIBLE_OUTPUT_FORMAT=json aptible db:list commands.

For heightened security, Users with read access can no longer see Database credentials, either in the UI or through the CLI.

Now, when clicking Reveal in the Database dashboard, read access Users will see a pop-up window that does not reveal the connection URL for the database.

The same is true in the CLI.

When using the aptible db:url HANDLE command in the CLI, Users with read access will see the following message, which no longer reveals the Database connection URL.

No default credential for database, valid credential types:

When using the APTIBLE_OUTPUT_FORMAT=json aptible db:list command, read access Users will see empty values for their Database connection URL and credentials.

Note: If your teams have passed the Database connection URL as an environment variable, Users with read access can still read this set configuration.

Manage Log and Metrics Drains, and modify Database Endpoints through the CLI with latest updates

We've released the newest version of the Aptible CLI, v0.19.1, which adds more command line functionality to help you better automate the management of Log Drains, Metric Drains and Database Endpoints.

Provisioning and managing Log Drains

Aptible users could already provision and manage Log Drains on the dashboard to send their container output, endpoint requests and errors, and SSH session activity to external logging destinations for aggregation, analysis and record keeping.

This update lets you do the same through the CLI.

You can create new Log Drains using the aptible log_drain:create command, with additional options to configure drain destinations and the kind of activity and output you want to capture and send. For example, to create and configure a new Log Drain with Sumologic as the destination, you'd use the following command.

aptible log_drain:create:sumologic HANDLE --url SUMOLOGIC_URL --environment ENVIRONMENT \
  [--drain-apps true/false] [--drain-databases true/false] \
  [--drain-ephemeral-sessions true/false] [--drain-proxies true/false]

Options:
  [--url=URL]
  [--drain-apps], [--no-drain-apps]                              # Default: true
  [--drain-databases], [--no-drain-databases]                    # Default: true
  [--drain-ephemeral-sessions], [--no-drain-ephemeral-sessions]  # Default: true
  [--drain-proxies], [--no-drain-proxies]                        # Default: true
  [--environment=ENVIRONMENT]

Just like with Sumologic, you can provision new Log Drains to Datadog, LogDNA, Papertrail, self-hosted Elasticsearch or to HTTPS and Syslog destinations of your choice.

We've also added supporting features to help your teams see the list of provisioned Log Drains using the aptible log_drain:list command and deprovision any of them with aptible log_drain:deprovision.

Provisioning and managing Metric Drains

As with Log Drains, you can now add new Metric Drains or manage existing ones through the CLI. Metric Drains allow you to send container performance metrics like disk IOPS, memory and CPU usage to metric aggregators like Datadog for reporting and alerting purposes.

You can create new Metric Drains using the aptible metric_drain:create command. With this, you can send the needed metrics to Datadog, InfluxDB hosted on Aptible, or an InfluxDB hosted anywhere else.

You can also see a list of Metric Drains created in your account using the aptible metric_drain:list command, or deprovision any of them with aptible metric_drain:deprovision.

Managing Database Endpoints

For existing Database Endpoints, the main setting you manage is IP filtering. Just like App Endpoints, Database Endpoints support IP filtering to restrict connections to your database to a set of pre-approved IP addresses. Until now this could only be managed through the UI; the latest CLI update lets you manage IP filters for already provisioned Database Endpoints using the aptible endpoints:database:modify command.

aptible endpoints:database:modify --database DATABASE ENDPOINT_HOSTNAME

Options:
  [--environment=ENVIRONMENT]
  [--database=DATABASE]
  [--ip-whitelist=one two three]  # A list of IPv4 sources (addresses or CIDRs) to which to restrict traffic to this Endpoint
  [--no-ip-whitelist]             # Disable IP Whitelist
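To make the option syntax more concrete, here is a small sketch that prints a filled-in modify command so it can be reviewed before running. The helper name `preview_ip_filter`, the database handle and the endpoint hostname are hypothetical, and the addresses come from reserved documentation ranges. Only the command and the --database and --ip-whitelist options shown above are used.

```shell
#!/bin/sh
# Print (rather than run) the modify command so it can be reviewed first.
# Arguments: database handle, endpoint hostname, then one or more IPv4 sources.
# preview_ip_filter is a hypothetical helper, not part of the Aptible CLI.
preview_ip_filter() {
  db=$1
  hostname=$2
  shift 2
  echo "aptible endpoints:database:modify --database $db $hostname --ip-whitelist $*"
}

# Hypothetical handle and hostname; substitute your own values.
preview_ip_filter my-database elb-example-123.aptible.in 203.0.113.10 198.51.100.0/24
```

Once the printed command looks right, it can be pasted into a terminal and run as-is.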

Download the latest version of the CLI today!

Quickly see who triggered a backup through the Aptible dashboard and CLI

Aptible Deploy has always allowed developers to trigger a backup, something we call a manual backup.

To help you quickly see who on your team triggered a database backup, and to help with any reviews, we've added a "created by" field in the Backups tab of your databases in the Aptible dashboard.

You can also see the equivalent of this through the CLI using the aptible backup:list command. Please make sure you're on version 0.18.3 or higher of the CLI.

Log drain improvements for high-performance, reliable delivery to external destinations

Aptible Deploy comes with built-in support for easily aggregating your container, SSH session and HTTP(S) endpoint logs and routing them to your destinations of choice for record-keeping and future analysis, be it in popular external destinations like Datadog, SumoLogic and PaperTrail, or to a self-hosted Elasticsearch database.

Since 2014, customers have used Aptible log drains to send hundreds of millions of log lines to various destinations. While the majority of our customers were able to aggregate their logs without a hiccup, we also heard that a few of them experienced issues when the volume of logs being generated was extremely high. These issues ranged from inconvenient delays in receiving logs at their destinations to packet loss during periods of high throughput.

So we decided to fix this by engineering and releasing a new version of Aptible log drains.

What customers can expect with this new version of log drains

The log drains of all Aptible accounts have been updated to the latest version, requiring no additional setup from customers. Customers can expect the following from the latest version.

Improved performance

With this update, users should see a noticeable improvement in the reliability and speed of their log drains. Customers may experience minimal to no lag when generating and sending their logs, even at very high volumes, thanks to the work we put in to increase throughput in the new version of our drains.

Better internal observability for faster remediation

By collecting FluentD data and graphing it as key metrics in Grafana, we’ve been able to set up alerts that monitor for issues based on the number of logs waiting to be sent, the number of times customer drains retry sending logs, failed output writes to different destinations, and others. We believe these metrics allow our reliability engineers to quickly identify root causes as issues arise, whether on Aptible’s side or the customer's side, and remediate them more efficiently.

Over time, we’ll evolve these metrics as we learn how our newest version of log drains performs in a wider variety of real world scenarios. Depending on how well these metrics perform, we may also choose to expose them to customers to enable more proactive, self-service remediation of log drain issues.

Easily modify databases without disruption with new CLI command: ‘aptible db:modify’

We are very excited to introduce a new command for the Aptible CLI: aptible db:modify. This command lets you make modifications to your databases without requiring any restarts.

Currently, the modifications we support are related to your database’s Disk IO performance.

An example of this is moving your database volumes to gp3. You can update your existing gp2 volumes to gp3, which provides a predictable 3,000 IOPS in baseline performance, with the added ability to provision performance independent of storage capacity. Moving to gp3 volumes should result in sizable performance improvements to sustained disk IO for most databases.

Examples

aptible db:modify $DB_HANDLE --volume-type gp3
aptible db:modify $DB_HANDLE --iops 9000
aptible db:modify $DB_HANDLE --volume-type gp3 --iops 9000

Note: Additional database disk I/O operations per second provisioned over the baseline (3000 IOPS) is priced at $0.01/Provisioned IO/Month. See our pricing page to calculate your costs based on your IOPS needs.
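As a back-of-the-envelope check, the sketch below estimates the monthly charge for IOPS provisioned above the baseline. It assumes the rate in the note means $0.01 per provisioned IOPS per month and that only IOPS above 3,000 are billed. The function name `extra_iops_cost` is made up for this example, and the pricing page remains the authoritative source.

```shell
#!/bin/sh
# Estimate the monthly charge for provisioned IOPS above the gp3 baseline.
# Assumption: $0.01 per provisioned IOPS per month, billed only above baseline.
BASELINE_IOPS=3000
CENTS_PER_EXTRA_IOPS=1

# extra_iops_cost is a hypothetical helper, not an Aptible CLI command.
extra_iops_cost() {
  iops=$1
  if [ "$iops" -gt "$BASELINE_IOPS" ]; then
    extra=$(( $iops - $BASELINE_IOPS ))
  else
    extra=0
  fi
  # Work in whole cents to avoid floating-point arithmetic in the shell.
  cents=$(( $extra * $CENTS_PER_EXTRA_IOPS ))
  printf '$%d.%02d\n' "$(( $cents / 100 ))" "$(( $cents % 100 ))"
}

# 9000 is the --iops value used in the examples above; prints $60.00.
extra_iops_cost 9000
```

Under these assumptions, provisioning 9,000 IOPS adds 6,000 IOPS over the baseline, or about $60 per month.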

You can also specify the volume type and IOPS in other commands. For example, if you want to change a volume's type and size in one operation, you can do so with a single db:restart command: