Aptible’s AI Gateway lets you access LLMs from OpenAI, Anthropic, and Amazon Bedrock through a single, compliant API. The gateway is designed for regulated industries; it provides HIPAA compliance with BAA coverage, automatic audit logging, and encryption, so your team can build AI-powered features without managing compliance infrastructure yourself.

Sign up for the AI Gateway beta to get started.

Getting Started

Sign up for the beta

Request access to the AI Gateway beta. Once approved, the AI Gateway features will be available at https://app.aptible.com/llm-keys.

Create an environment

LLM keys are scoped to environments, which is where you configure model access policies and monitor costs. You can use an existing environment or create a new one dedicated to your AI workloads.

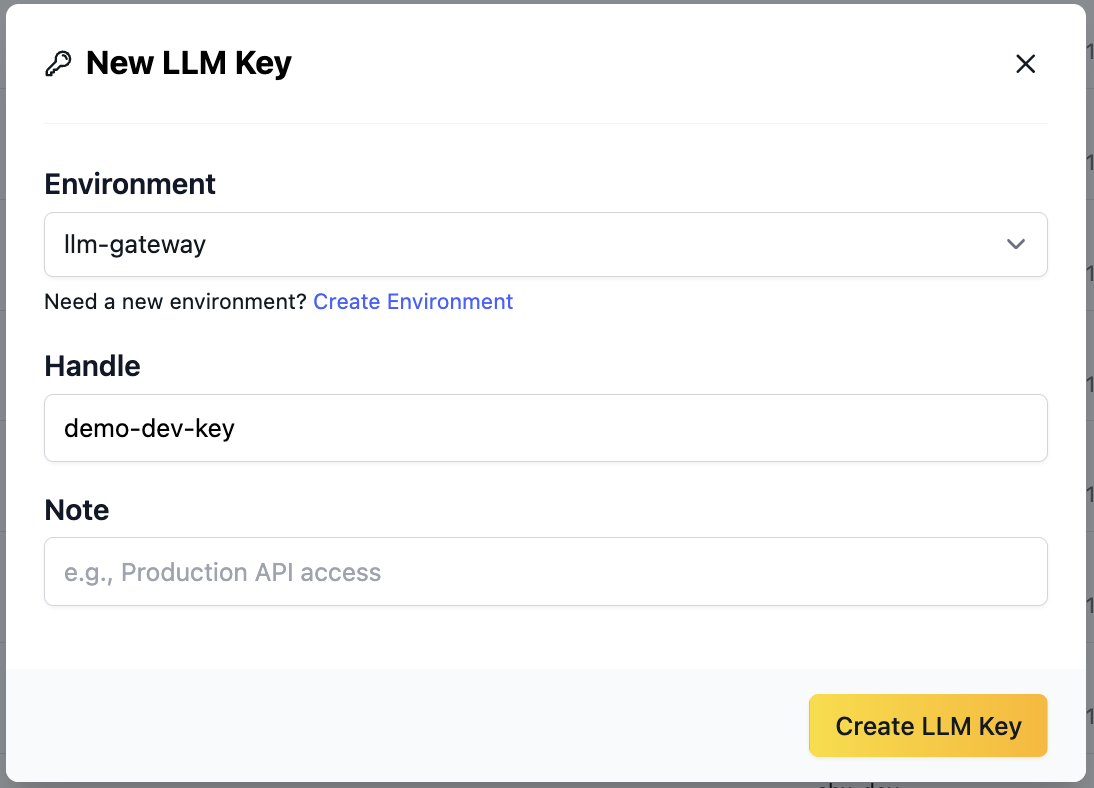

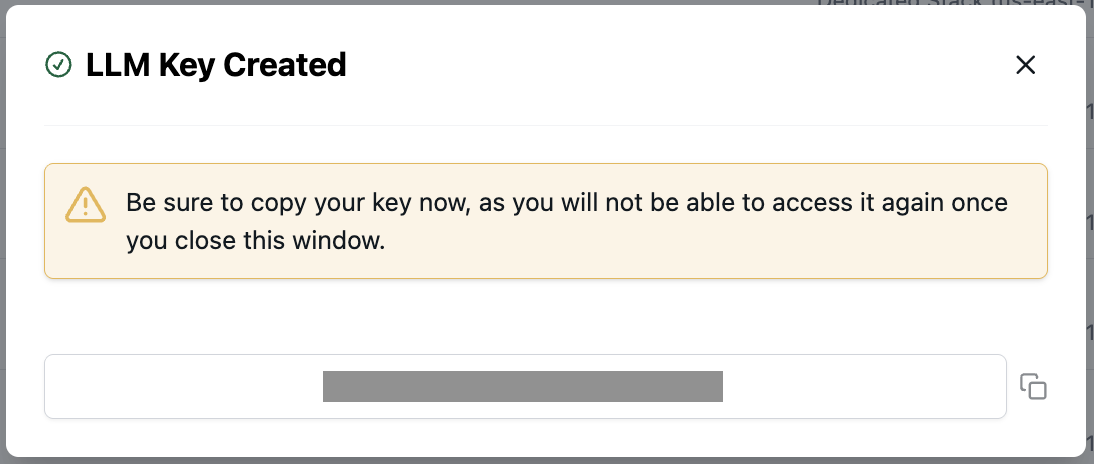

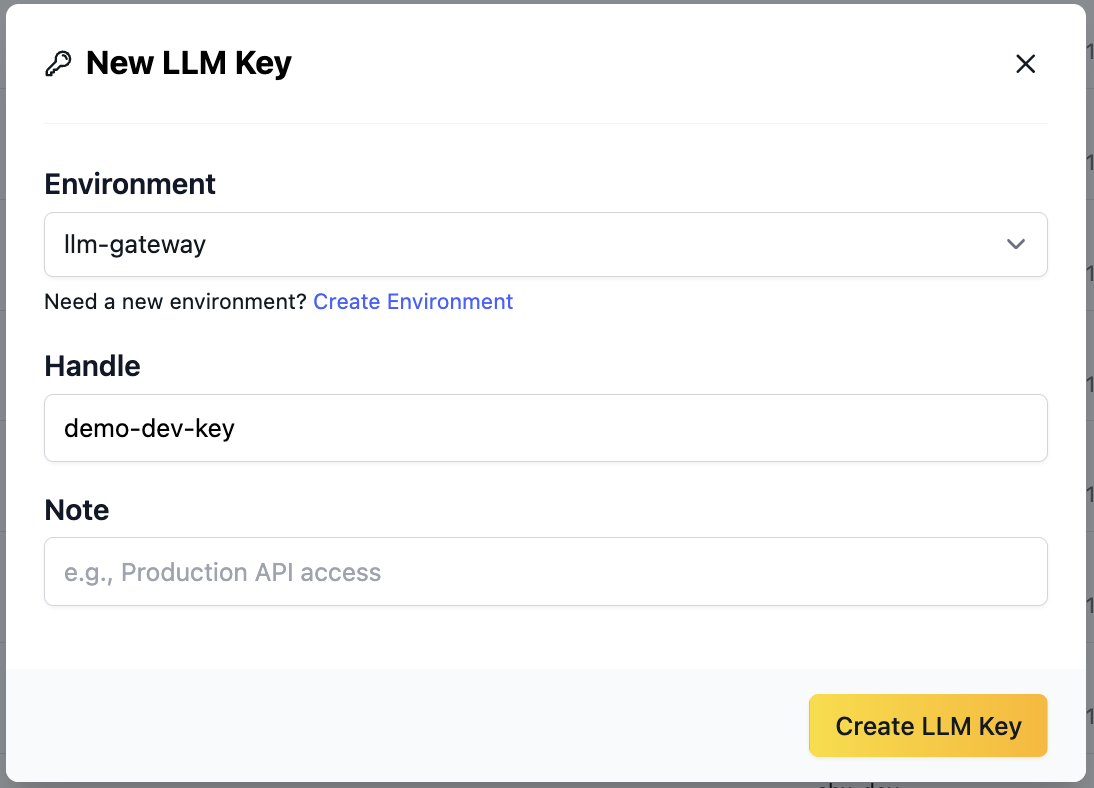

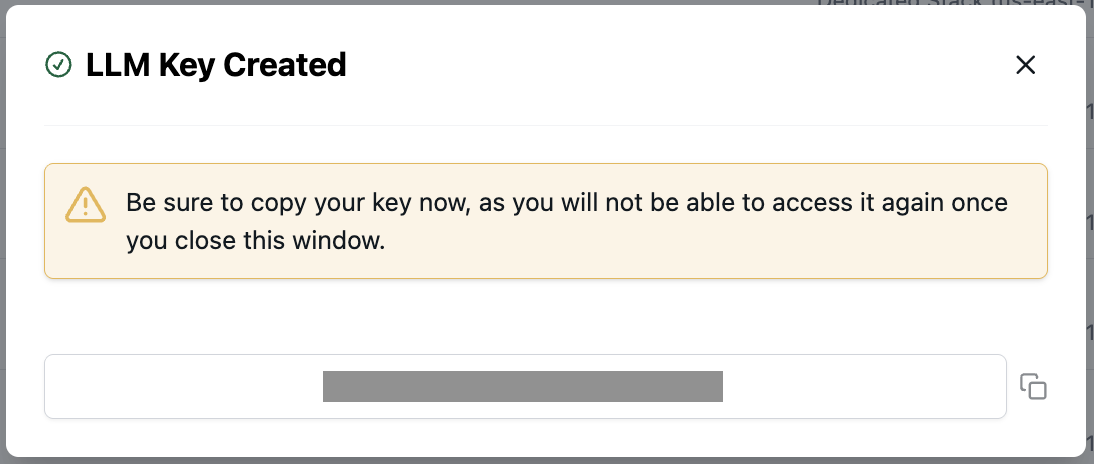

Create an LLM key

Navigate to the AI Gateway section in the Aptible dashboard. You can create keys from:

- The main AI Gateway > LLM Keys page

- The environment details page under AI Gateway > LLM Keys

Connect to the gateway

All requests go through a single endpoint.

You can see the available models on the key details page; copy your desired model string and use it wherever you configure your LLM key. Here are instructions for setting up Claude Desktop to use your key, as well as an example of usage with an OpenAI-compatible client.
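As a rough illustration of what a request from an OpenAI-compatible client might look like, here is a minimal sketch using only the Python standard library. Several things here are assumptions, not confirmed details of the gateway: the base URL is a placeholder (use the endpoint shown in your dashboard), the `/chat/completions` path and `Bearer` authorization header follow the usual OpenAI convention, and the model string is made up.

```python
import json
import urllib.request

# Hypothetical placeholder: substitute the endpoint shown in your Aptible dashboard.
GATEWAY_BASE_URL = "https://gateway.example.invalid/v1"


def build_chat_request(base_url, api_key, model, user_message):
    """Build (but do not send) an OpenAI-style chat completion request.

    Assumes the gateway accepts the conventional OpenAI request shape.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

To try it, build a request with your LLM key and a model string copied from the key details page, then pass it to `send`. Keeping the build step separate from the network call makes it easy to inspect the URL, headers, and body before anything leaves your machine.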

Supported Models

The AI Gateway supports models from three providers:

| Provider | Example Models | Prefix |
|---|---|---|
| Anthropic | Claude Opus, Claude Sonnet | bedrock/anthropic |
| OpenAI | GPT-5.2 | openai/ |
| Amazon Bedrock | Qwen, Llama, and other Bedrock-hosted models | bedrock/ |

Model availability may change during the beta. Check the LLM key details page for the current list of available models.
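If you handle model strings in your own code, the prefixes in the table above can be used to work out which provider a string belongs to. The sketch below is one way to do that; the helper name `provider_for` and the model strings in the usage note are hypothetical, and the only details taken from this page are the three prefixes.

```python
# Prefixes as listed in the Supported Models table.
PROVIDER_PREFIXES = {
    "bedrock/anthropic": "Anthropic",
    "openai/": "OpenAI",
    "bedrock/": "Amazon Bedrock",
}


def provider_for(model_string):
    """Return the provider for a model string, or None if no prefix matches.

    Longer prefixes are checked first so that "bedrock/anthropic" is
    preferred over the more general "bedrock/".
    """
    for prefix in sorted(PROVIDER_PREFIXES, key=len, reverse=True):
        if model_string.startswith(prefix):
            return PROVIDER_PREFIXES[prefix]
    return None
```

For example, `provider_for("bedrock/example-llama")` resolves to Amazon Bedrock, while a string starting with `bedrock/anthropic` resolves to Anthropic because the longer prefix is tried first.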

Features

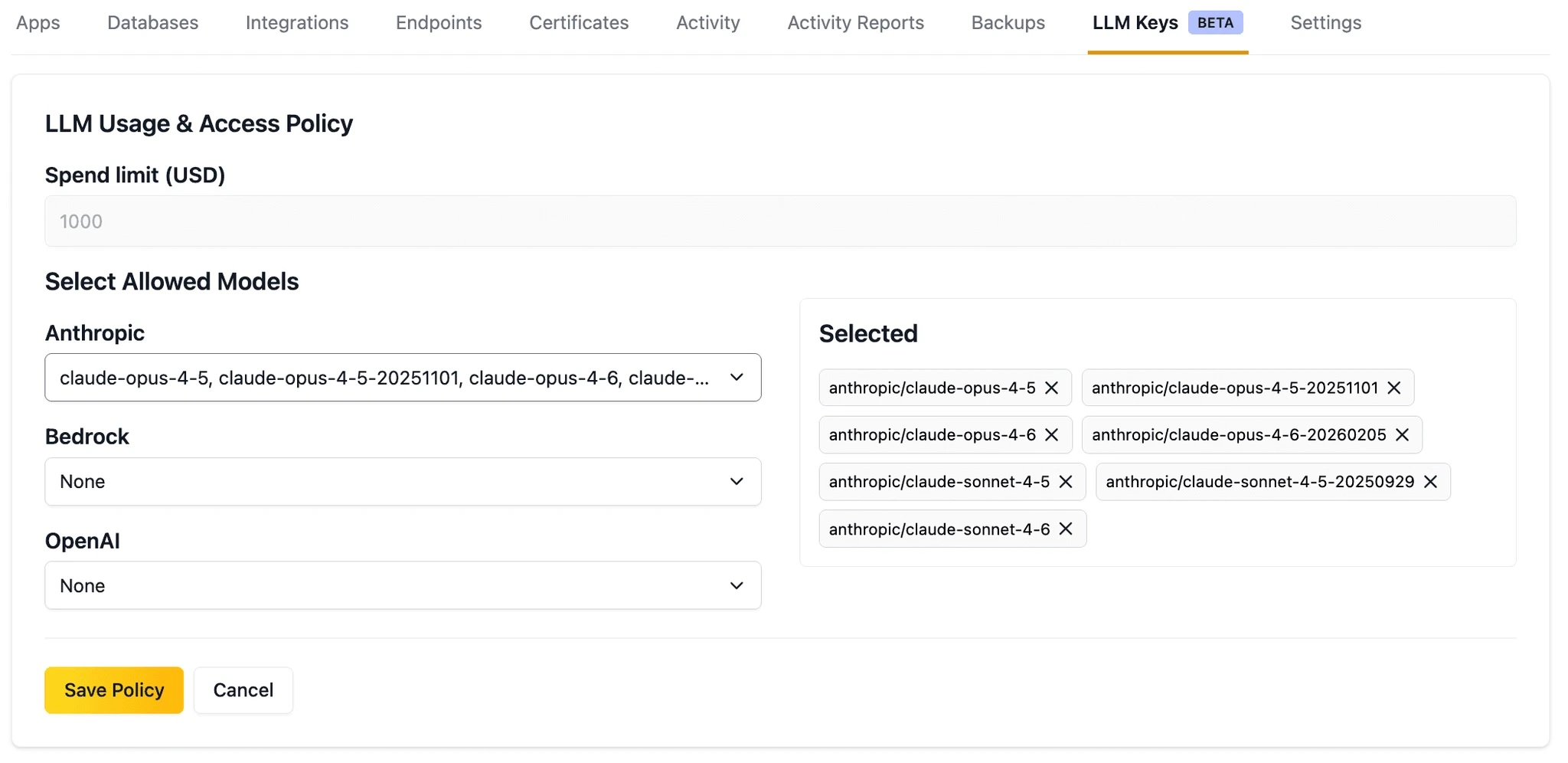

Model Access Policies

Control which models your team can use by configuring model access policies at the environment level. Policies apply to all LLM keys within an environment, giving you centralized control over model usage across your applications and developers.
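The scoping described above can be pictured with a small conceptual model. This is only an illustration of the idea that keys inherit their environment's policy; it is not Aptible's implementation, and every class, field, and model name in it is hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Environment:
    """Holds the model access policy shared by all of its keys."""
    name: str
    allowed_models: set = field(default_factory=set)


@dataclass
class LLMKey:
    """A key carries no policy of its own; it points at an environment."""
    label: str
    environment: Environment


def is_model_allowed(key, model):
    """Check a model against the policy of the key's environment."""
    return model in key.environment.allowed_models
```

Because both keys in an environment consult the same `allowed_models` set, changing the policy once changes what every key in that environment can use.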

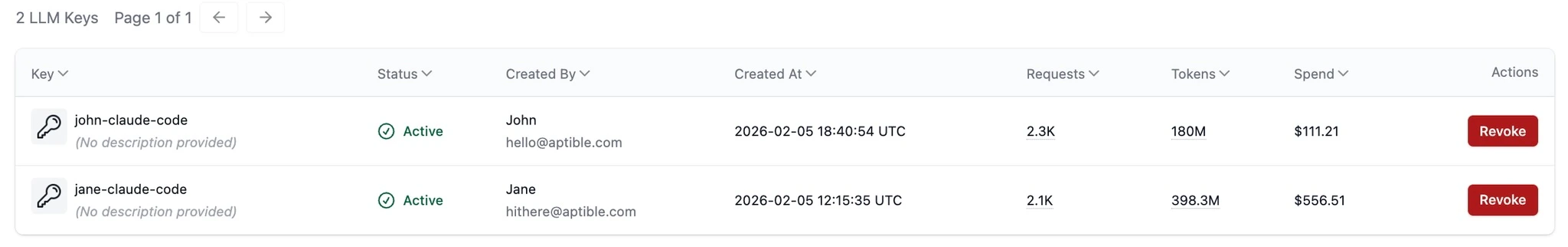

Cost Visibility

Track your LLM spend across your organization:

- Organization-level costs: See total AI Gateway spend across all environments for the current billing period.

- Environment-level costs: Break down spend by environment to understand which teams or applications are driving usage.

- Per-key costs: View usage costs for each individual LLM key during the current billing period to identify which keys generate the most activity and find opportunities for cost optimization.
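The three levels above relate to one another as a simple roll-up: per-key costs sum to environment totals, which sum to the organization total. The sketch below shows that arithmetic on made-up figures. The record shape and the function name `roll_up_costs` are assumptions for illustration; the dashboard does this for you, and this page does not describe a cost export format.

```python
from collections import defaultdict
from decimal import Decimal


def roll_up_costs(per_key_costs):
    """Aggregate per-key costs into environment and organization totals.

    per_key_costs: iterable of (environment, key_label, cost) tuples, with
    cost given as a string so Decimal can parse it exactly.
    """
    by_environment = defaultdict(Decimal)
    for environment, _key_label, cost in per_key_costs:
        by_environment[environment] += Decimal(cost)
    organization_total = sum(by_environment.values(), Decimal("0"))
    return dict(by_environment), organization_total
```

`Decimal` is used instead of `float` so that currency amounts add up without binary rounding error.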

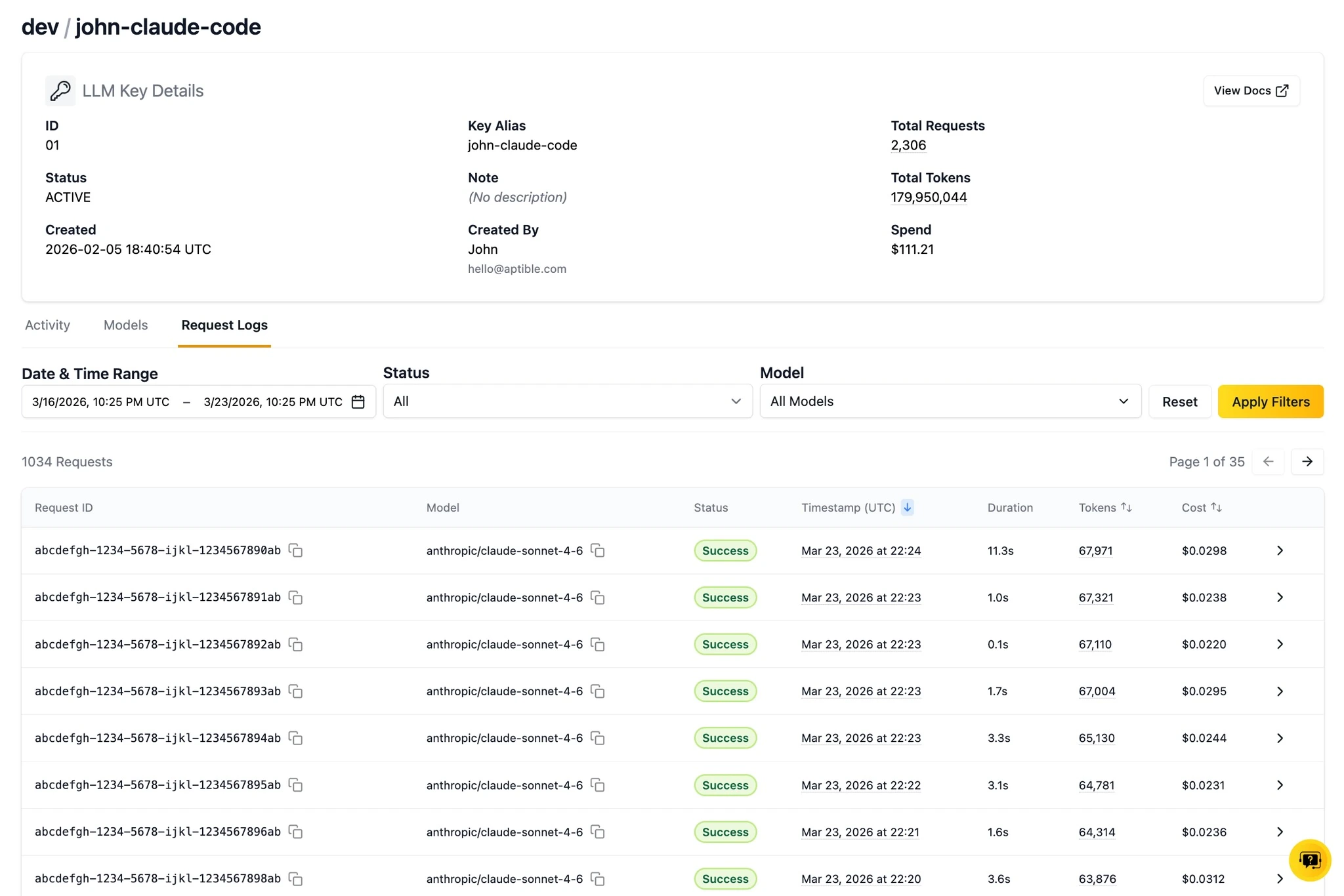

Request History

View request activity for each key over the past week. The key details page shows token consumption and associated costs for each request, giving you visibility into how your keys are being used.

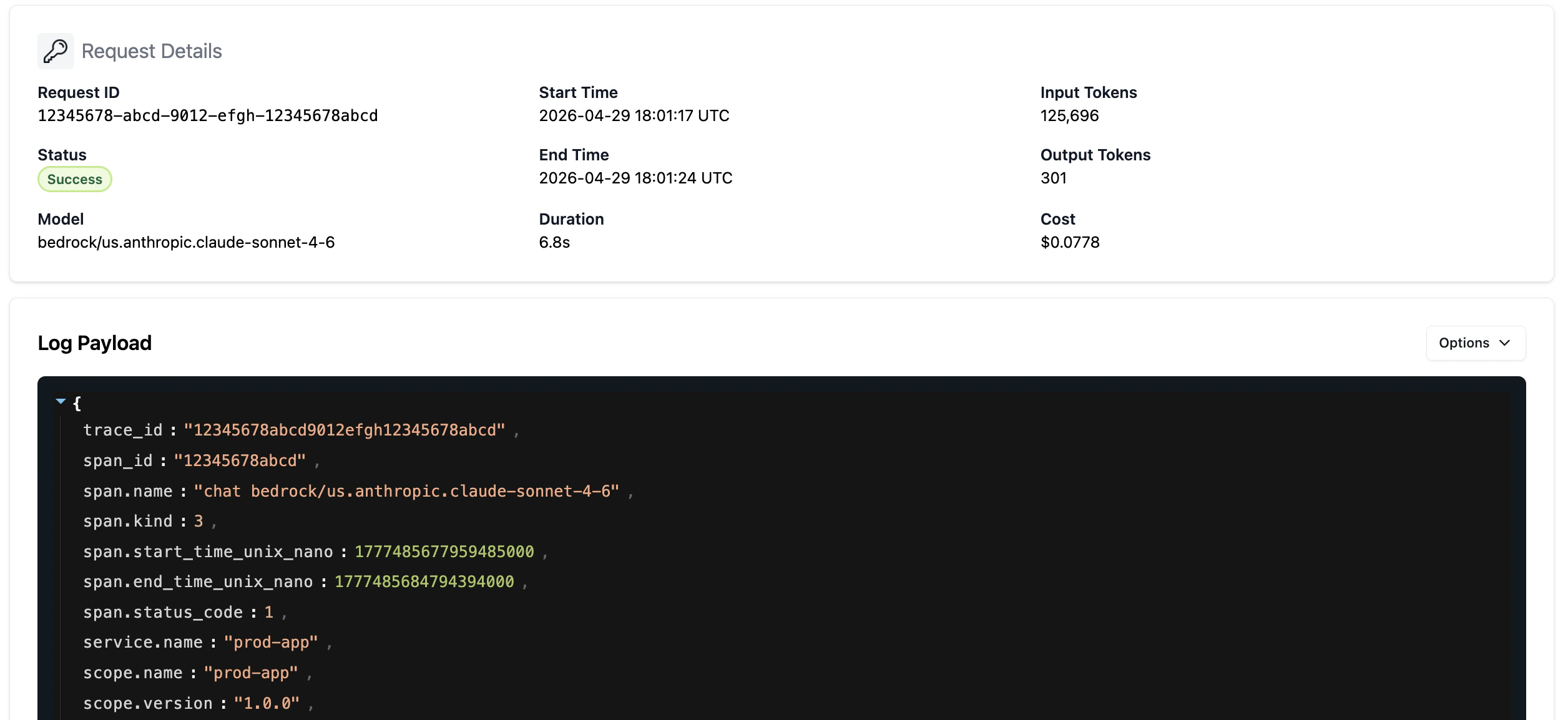

Request Logs

LLM requests and responses are available in the Aptible dashboard to help with prompt refinement and troubleshooting. Full request and response logs are kept in the UI for 7 days. Click the chevron in any request row to see the full request details.

Trace Drains to Langfuse

See Langfuse integration

Compliance

The AI Gateway provides HIPAA compliance out of the box:

- BAA coverage: Aptible’s BAA covers all models and capabilities accessed through the gateway.

- Audit logging: All LLM calls are automatically logged for compliance and auditing purposes.

- No PHI training: LLM providers are prohibited from retaining or using PHI for model training.

- Encryption: All data is encrypted in transit and at rest.

Coming Soon

We’re actively building new capabilities for the AI Gateway:

- Data residency: Deploy the AI Gateway in specific regions to meet local data residency and compliance requirements.